A free web scraper is a tool that automatically extracts data from websites at no cost: pulling text, prices, contacts, or any structured content into a spreadsheet or database without manual copy-pasting.

Finding a free web scraper that doesn’t cut you off after 200 pages or quietly expire after 7 days takes longer than the scraping job itself.

We tested 12 tools in 2026 and recorded exactly what each free tier gives you before the limits kick in. No vague claims. Just the actual caps, the real tradeoffs, and the one thing you only discover after actually using each tool. Let’s dive deeper!

Quick Answer: Best Free Web Scrapers in 2026

| Tool | Best For | No-Code | Free Tier Limit | Rating |

| Octoparse | All-round, no-code scraping | ✅ | 10 tasks; Up to 10K data per export; 50K data export per month; Unlimited pages per run | G2: 4.8/5 |

| ParseHub | JS-heavy and complex sites | ✅ | 5 projects, 200 pages/run | G2: 4.3/5 |

| ScrapingBot | E-commerce and product APIs | ❌ | 100 credits/month | N/A (developer API) |

| WebScraper.io | Multi-page site mapping | ✅ | Extension free; cloud paid | G2: 4.4/5 |

| Data Scraper | Quick table and list extraction | ✅ | 500 pages/month | Chrome Web Store: 4.3/5 |

| Scraper (Chrome) | XPath power users | ⚠️ Partial | Unlimited | Chrome Web Store: 4.1/5 |

| Chat4Data | AI natural-language scraping | ✅ | 1M tokens | Chrome Web Store: 4.6/5 |

| Apify | Pre-built actor marketplace | ✅ / ❌ | $5 monthly credits | G2: 4.7/5 |

| Scrapy / BeautifulSoup / Playwright / Puppeteer | Python & Node.js open-source libraries | ❌ | Open source | GitHub: 61k–94k stars |

What Does “Free” Actually Mean for Web Scrapers?

Before picking a tool, it helps to understand what “free” looks like in practice. In practice, there are usually three models:

- Truly unlimited. Open-source libraries like Scrapy and Playwright carry no usage caps. You cover your own server costs, nothing else.

- Free tier with hard caps. Most GUI tools offer a permanent free plan with real limits: typically 500 pages per month, 5 projects, or a monthly credit allowance. These work well for research and one-off jobs, but not for production pipelines.

- Free trial only. Some tools give full access for a fixed period, then require payment. These are not included in this list.

Every entry in this article lists the exact free tier limit so you can match it to your workload before committing.

For a broader look at web scraping and use cases, see our dedicated guides:

1. What is web scraping and how it works

2. Top 15 Most Scraped Websites in 2026

How We Evaluated These Tools

We ran each tool against a standard set of tasks: scraping a paginated e-commerce listing page, extracting a directory table, and navigating a JavaScript-rendered site. We cross-referenced user feedback from G2, Capterra, and Chrome Web Store reviews. Rating scores and review counts are pulled directly from those platforms and were last verified in April 2026. We hope you can find your top pick according to different scenarios and needs after reading this article!

12 Best Free Web Scraping Tools in 2026

No-Code Desktop and Cloud Scrapers

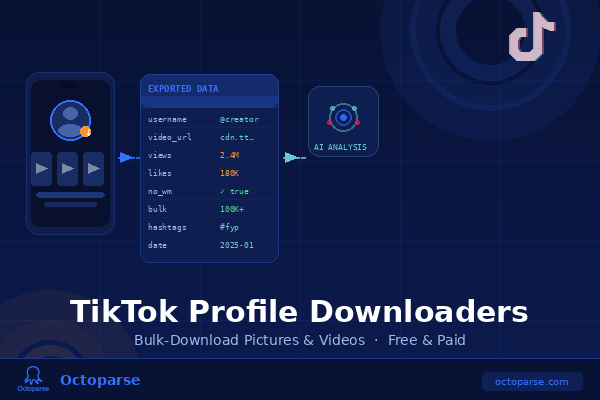

Octoparse: Best Free Web Scraper for Non-Coders

| Type | Desktop + Cloud |

| Platform | Windows, Mac, Cloud |

| Free tier | 10 tasks; Up to 10K data per export; 50K data export per month; Unlimited pages per run |

| Export | CSV, Excel, JSON, Google Sheets, databases |

| Rating | G2: 4.8/5, Capterra:4.7/5 |

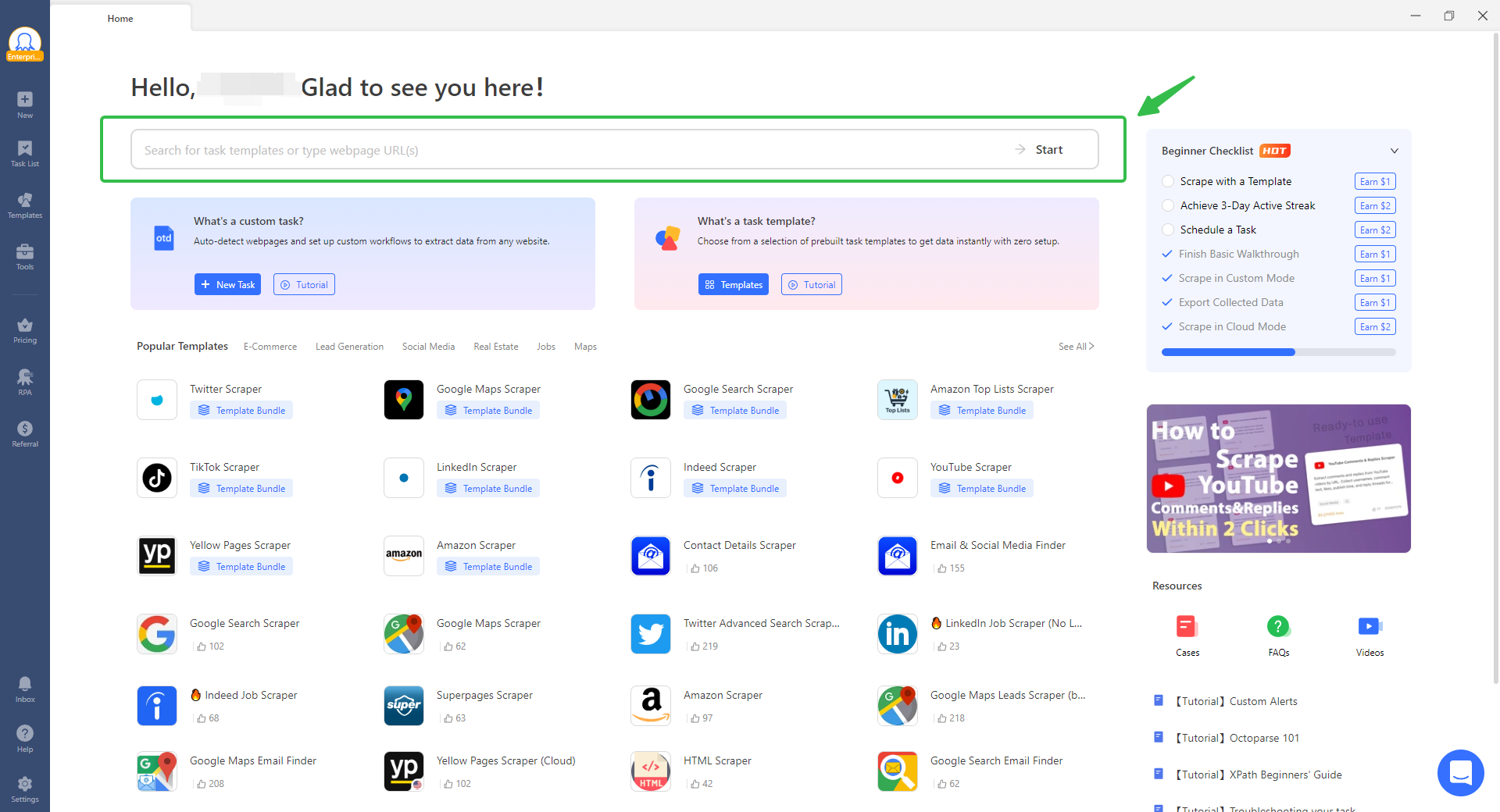

Octoparse is a visual, point-and-click scraping tool designed for users who need structured data without writing code. The AI auto-detection feature scans any open page and identifies data fields, pagination patterns, and site structure automatically.

Key features:

- AI Auto-Detection. Opens any page and configures the extraction fields in under 30 seconds on most standard sites. In our own testing against an e-commerce category page with 48 products across 3 pages, auto-detection picked up the correct fields (name, price, rating, URL) on the first pass and handled pagination without manual configuration.

- 600+ pre-built templates. Ready-to-run scrapers covering social media, lead generation, real estate, e-commerce, jobs, Google Maps and more scenarios. Many are free to use. Download Octoparse, search for the template you want, enter the parameters as directed, and data arrives in minutes. Or you can click the link below to have a try.

https://www.octoparse.com/template/contact-details-scraper

- Handles complex pages. Pagination, infinite scroll, AJAX-loaded content, detail page extraction, and login walls are all managed through the visual workflow builder without code.

- Broad export options. Unlike most free tools that stop at CSV, Octoparse pushes directly to MySQL, SQL Server, PostgreSQL, and Google Sheets on applicable plans.

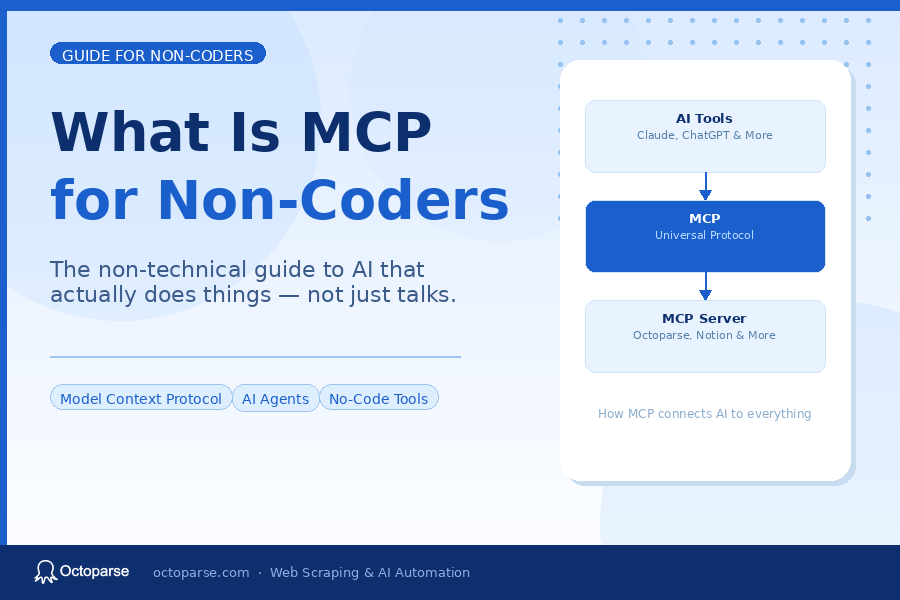

- AI connector to live web data. It is also a web scraping MCP server that allows you to do scraping with AI clients like Claude, ChatGPT, Gemini and more, and then directly let AI do research for you based on exported data.

- More Advanced Features with Cost-effective Plans. You can also try advanced features like cloud scraping, task scheduling, data export API, automatic CAPTCHA solving and in-built proxy rotation with considerably cost-effective plans.

What real users say:G2 reviewers (4.8/5) consistently highlight time savings from AI auto-detection and the template library. A Data Engineering Team Lead who has used Octoparse since 2019, described it on Capterra as “very efficient” at handling complex scraping challenges including JavaScript, AJAX, scrolling, iframes, and API scraping.

Limitation: Heavily Cloudflare-protected sites may require a paid plan’s dedicated proxy support.

📑Our take: For the most common scraping jobs like Amazon listings, Google Maps, LinkedIn profiles, the template library gets you to working data in under 10 minutes without touching a single selector. If you have urgent tasks, it’s the fastest way out to try them.

ParseHub: Best for Complex and JavaScript-Heavy Sites

| Type | Desktop |

| Platform | Windows, Mac, Linux |

| Free tier | 5 public projects, 200 pages/run |

| Export | JSON, CSV |

| Rating | G2: 4.3/5, Capterra: 4.5/5 |

ParseHub uses machine learning to extract data from pages built with AJAX, JavaScript, and cookies. You navigate to a target page in the ParseHub browser, click on the elements you want, and it learns the extraction pattern across the entire site.

Key features: Visual click-based interface, handles JavaScript and login-gated pages, regex and XPath support for advanced configurations, cross-platform native apps for Windows, Mac, and Linux.

What real users say: Reviewers on G2 and Capterra consistently praise ParseHub for tackling JavaScript-heavy sites that simpler tools cannot reach. One Capterra reviewer, a real estate marketer, extracted over 10,000 leads from property directories on the free plan, though initial setup required about two focused hours. What is worth noting is that, on Trustpilot, where less technical users tend to write reviews, the rating drops to 2.9/5, which is a gap that reflects the tool’s complexity more than its quality.

Limitation: The 5-project cap restricts parallel workflows. Free plan jobs run at lower priority on ParseHub’s servers, meaning longer wait times during peak hours. Paid plans start at $189/month as of early 2026. IP rotation is a paid-only feature; some users find they still need a third-party proxy on top for the most aggressive anti-bot targets.

📑Our take: ParseHub has a specific non-obvious trap: after every click that changes the page (like a pagination button), you have to explicitly define whether it is a page change. Miss this step and the scraper silently stops or loops without an error. It is the single most common cause of a broken first scraper. Once you understand how the parent-child selector tree works, it handles nearly any site. But there is no shortcut around the 2-hour orientation period. If you are on Linux and need a no-code desktop scraper, ParseHub is one of very few options available.

Cloud Platforms and APIs

ScrapingBot: Best for E-Commerce Product APIs

| Type | API |

| Platform | Cloud |

| Free tier | 100 credits/month |

| Export | JSON, HTML |

| Rating | N/A (developer API, no major review platform) |

ScrapingBot is an API-based scraper designed for developers who need clean product data without managing their own infrastructure. It specializes in scraping the data of e-commerce pages like product title, price, description, image, stock and extracted automatically through a single API call.

Key features: Specialized endpoints for real estate, Google search results, and social networks; live URL testing dashboard; 100 free credits monthly for testing.

Limitation: API-only. Requires basic technical ability. 100 free credits cover roughly 100 simple page requests — a proof-of-concept allowance, not a working free plan. Paid plans start at $43/month.

📑Our take. Use the 100 free credits to confirm the API handles your target site’s structure cleanly before committing to a paid plan. One practical note is that the real-estate endpoint is noticeably slower than the generic scraper. You can factor this into timeout settings in your code, or requests will appear to fail when they are simply still processing.

Apify: Best Pre-Built Scraper Marketplace

| Type | Cloud platform (Actor marketplace) |

| Platform | Cloud, any OS |

| Free tier | $5/month in platform credits |

| Export | JSON, CSV, Excel, Google Sheets, databases |

| Rating | G2: 4.7/5 (432 reviews) |

Apify is a full-stack cloud platform for web scraping and automation. Rather than a single scraper, it offers a marketplace of pre-built “Actors” , ready-to-run scrapers for specific platforms. You can also build custom Actors in Python or JavaScript.

Key features: Actor marketplace with pre-built scrapers, cloud execution with scheduling, Playwright and Puppeteer integration, proxy management, and integrations with Zapier, Make, n8n, and major databases.

What real users say. Based on G2 reviews, Apify holds 4.7/5. But “Pricing Issues” (88 mentions) and “Expensive” (87 mentions) are the two most common negative tags, per G2’s own topic analysis. The standout praise is the Actor marketplace: reviewers consistently describe going from zero to a working scraper in under an hour. A Capterra reviewer put it bluntly: “The primary drawback is the steep learning curve for non-developers and the credit-based pricing model, which can be confusing and lead to unexpected costs when scaling.”

Limitation. Community-built Actors vary in quality and maintenance. Unused monthly credits do not roll over. Some Actors layer per-result fees on top of platform compute units. proxy costs alone can exceed compute costs at moderate volume. See full pricing breakdown before committing to any Actor at scale.

📑Our take: The non-obvious cost trap is that Apify stacks compute units, proxy bandwidth, storage, and Actor rental charges independently. Before running any Actor at volume, open its dedicated Pricing tab, not the general platform pricing page, to check for per-result fees. A safer pattern is to run a 10–20 record sample first, inspect the Run usage panel, then project to full volume. The $5 free tier confirms an Actor works for your target; it is not a monthly working budget.

Free Web Scraper Chrome Extensions

WebScraper.io: Best for Multi-Page Site Mapping

| Type | Chrome Extension + Cloud |

| Platform | Chrome, Cloud |

| Free tier | Browser extension fully free; cloud scraping is paid |

| Export | CSV, XLSX, JSON |

| Rating | G2: 4.4/5, Trustpilot: 4.2/5, Chrome Web Store: 4.0/5 |

WebScraper.io is the most widely installed web scraping extension in the Chrome Web Store. It works inside Chrome’s DevTools panel, where you build a “sitemap”, a structured plan of how the scraper should navigate a site and which data to collect at each level.

Key features: Point-and-click sitemap builder, full JavaScript execution, pagination and infinite scroll support, cloud extension with scheduling and integrations for larger jobs.

What real users say. Amomng Trustpilot reviewers, many of them long-term users of 2 or more years. They consistently describe the tool as reliable and the support team as unusually hands-on. One reviewer described a decade of use across a master’s thesis, event scraping, and job market research without switching tools. However, ther is a recurring friction in G2 reviews: beginners often get empty results on their first attempt because they missed a selector type or hierarchy step, and debugging feels more like coding than expected.

Limitation: The free extension runs locally with no scheduling and no IP rotation. Upgrading to cloud removes both restrictions but adds cost.

📑Our take: WebScraper.io has a trap beginners miss entirely. If you want to scrape detail pages (clicking into each product listing to get more data), those selectors must be nested inside a Link selector, not added at the root level. Many first-time users add all selectors at the root and wonder why they only get data from the first page. Nesting is the key. Watch one full multi-level tutorial before you start; it cuts the learning time in half.

For more extension comparisons, see our best free web scraper Chrome extensions guide.

Data Scraper: Best for Quick Table and List Extraction

| Type | Chrome Extension |

| Platform | Chrome |

| Free tier | 500 pages/month |

| Export | CSV, XLS |

| Rating | Chrome Web Store: 4.3/5 |

Data Scraper extracts table and list-format data from a single page in a few clicks. Select any element and it auto-detects the repeating pattern, pulling every matching item, no sitemap or configuration required.

What real users say: Users describe it as the fastest zero-setup option for pulling structured tables like extracting price lists, directories, product listings out of a page. But here is a recurring limitation in reviews: nested or non-tabular layouts frequently require switching to a more capable tool.

Limitation: Works best on structured, table-format data. Free plan caps at 500 pages per month.

📑Our take: Data Scraper handles a table, directory, or product list in under two minutes with no configuration. For anything more complex or recurring, move to WebScraper.io or Octoparse. One hidden gotcha is that auto-detection sometimes grabs table headers as data rows. So it is advisable to scroll through the preview before exporting to catch duplicated column names in your output.

Scraper (Chrome): Best for XPath Power Users

| Type | Chrome Extension |

| Platform | Chrome |

| Free tier | Unlimited |

| Export | Google Sheets |

| Rating | Chrome Web Store: 4.1/5 |

Scraper is a minimal extension for users comfortable with XPath or jQuery selectors. Right-click selected text, choose “Scrape Similar,” and it extracts all matching content from the page. Custom XPath columns extend what gets captured.

Limitation. Intermediate to advanced users only. Without XPath knowledge, extracting useful output is harder than with the other extensions in this list.

📑Our take. If you know XPath and want a zero-setup scraper that feeds directly into Google Sheets, Scraper is a practical option. The right-click workflow is the fastest path from “I see the data” to “I have the data” for a single-page extraction job. Not the right starting point for beginners.

Chat4Data: Best Free AI Web Scraper for Non-Coders

| Type | Chrome Extension (AI-powered) |

| Platform | Chrome |

| Free tier | 1 million tokens for new users, then $1/million tokens |

| Export | Excel, CSV, JSON |

| Rating | Chrome Web Store: 4.6/5, Product Hunt: #1 Product of the Day |

Chat4Data is an AI-powered Chrome extension that takes a fundamentally different approach from every other tool in this list. Instead of pointing and clicking on page elements, you describe what you want in plain English, and the AI handles navigation, field detection, pagination, and export on its own.

How it works: Type a task like “Open Amazon, search for Lego, sort by best sellers, and collect product name, brand, rating, and price from the first 3 pages.” Chat4Data shows you a step-by-step execution plan before running anything. Review it, adjust if needed, confirm, and data arrives in Excel, CSV, or JSON.

Key features:

- Execution plan preview. The scraper maps out every navigation step before consuming a single token. No other free tool in this category offers this level of pre-run transparency.

- Task reuse. Configure once, save, and re-run anytime. Saved tasks skip the AI analysis step, making ongoing monitoring significantly cheaper than tools that re-analyze on every run.

- Privacy-first design. All scraping runs locally in your browser. No data passes through Chat4Data’s servers.

- Handles complex pages. Pagination, infinite scroll, detail page extraction, and human-like browsing behavior to reduce bot detection triggers.

- Powered by multiple LLMs. Gemini, Claude, and other models handle natural language understanding.

What real users say: Reviewers on the Chrome Web Store and Product Hunt consistently highlight the near-zero learning curve. Users working with product data, lead lists, and contact directories report it as a practical time-saver for non-technical workflows. The most common friction points is that initial task configuration takes about 2 minutes while the AI analyzes the page.

Limitation. Not designed for industrial-scale extraction. Very large scrapes are slower than no-code tools like Octoparse .

📑Our take: The 1 million free tokens sounds like a lot and it is for casual use. In practice, a complex multi-page scrape with detailed field instructions consumes roughly 8,000–15,000 tokens per run, so the free allocation covers 65–125 full tasks before the meter starts. For recurring jobs, use the task reuse feature: saved tasks skip the AI analysis step and use far fewer tokens on subsequent runs.

For Developers: Free Open-Source Libraries

If you write code, these libraries have no usage caps, no credit limits, and no vendor lock-in.

| Library | Language | Best For | Community Signal | Free? |

| Scrapy | Python | Large-scale crawlers with data pipelines | 60k+ GitHub stars | Open source (BSD) |

| BeautifulSoup | Python | HTML parsing, simple one-off tasks | Widely used in Python ecosystem | Open source (MIT) |

| Playwright | Python / Node.js / Java | JS-heavy sites, SPAs, multi-browser | 80k+ GitHub stars | Open source (Apache 2.0) |

| Puppeteer | Node.js | Headless Chrome automation | 90k+ GitHub stars | Open source (Apache 2.0) |

Limitation: These libraries handle extraction logic only. Proxy rotation, scheduling, storage, and anti-detection are your responsibilities. When a target site changes its layout, your scraper breaks and you fix it.

📑Our take: Scrapy (60k+ GitHub stars, actively maintained by Zyte) is the industry standard for production crawlers since it is asynchronous, extensible, and battle-tested. BeautifulSoup is the fastest way to parse a static page in 20 lines of code. For JavaScript-heavy sites in 2026, Playwright is the stronger choice over Puppeteer: active cross-browser support across Chromium, Firefox, and WebKit, better async handling, and a more active contributor community. One practical tip: Scrapy’s default download delay is 0. Set “DOWNLOAD_DELAY = 1” in your settings.py file from the start, or your scraper will hammer target sites and trigger bans faster than you expect.

For a hands-on guide, see our Python web scraping tutorial.

Which Free Web Scraper Should You Use?

| Your Situation | Best Tool | Why |

| No coding skills, want results fast | Octoparse | AI auto-detection and 600+ templates; sufficient free tasks |

| Complex JavaScript site, no code | Octoparse or ParseHub | Both handle AJAX, infinite scroll, and login walls visually |

| Browser extension, zero install | Data Scraper or Chat4Data | No download; works inside Chrome |

| Prefer to describe data in plain English | Chat4Data | Natural language input with execution plan preview |

| Scraping tables or price lists one-off | Data Scraper | Fastest setup for structured table data |

| Multi-page site with complex navigation, from browser | WebScraper.io | Sitemap builder handles hierarchical navigation |

| Python developer, large-scale project | Scrapy | Asynchronous, extensible, 61k+ star community |

| JavaScript-heavy site, have code skills | Playwright | Best multi-browser headless automation in 2026 |

| E-commerce product data via API | ScrapingBot | Pre-built product and retail endpoints |

| Scraping on Linux without code | ParseHub | One of very few GUI scrapers with native Linux support |

| Know XPath, need Google Sheets output | Scraper (Chrome) | Direct Sheets export, unlimited free use |

| Need pre-built scrapers for popular platforms | Apify | Ready-to-run Actors, cloud-hosted |

Use the table above to match your situation to the right tool.

Start with Octoparse if you are a non-developer, its free plan is the most generous of any GUI tool in this list, and 600+ templates cover the majority of common scraping jobs. If you want to try AI features totally, Chat4Data or ParseHub are the next options. For developers, Scrapy handles everything at scale; Playwright handles JavaScript-heavy pages where Scrapy’s default HTTP requests return empty results.

Tips to Get the Most From a Free Web Scraper

- Check your free tier limit before starting.

Most tools cap free usage at 200–500 pages per month or 5 projects. Octoparse’s free plan is the exception — unlimited basic task runs. Match the tool’s limit to your workload before building a scraper. All tools in this list have pricing pages linked in the Quick Answer table above.

- Add request delays on busy sites.

Even no-code tools can trigger bot detection if they fire requests too quickly. Set a 1–3 second delay between requests in any tool that exposes this setting. Chat4Data navigates like a real browser by default, which avoids most basic bot detection without extra configuration.

- Use templates and saved tasks.

Octoparse’s 600+ templates and Chat4Data’s saved task reuse skip the configuration step for common targets. There is no reason to build a scraper from scratch for Amazon, LinkedIn, or Google Maps when a working template already exists.

- Know when to upgrade.

Free tools work well for research, one-off pulls, and light monitoring. When scraping becomes a daily business workflow like price tracking pipelines, continuous lead generation, automated data feeds, a paid plan’s reliability typically pays for itself in recovered time.

Why Octoparse Is the Best Free Web Scraper for Most People

If you are not a developer and you need structured data from the web, here is the case for starting with Octoparse specifically.

- The free plan is genuinely usable.

Unlimited basic task runs means you are not counting pages or projects. Every other GUI tool in this list caps the free plan significantly — 500 pages per month (Data Scraper), 5 projects (ParseHub), $5 in credits (Apify). Octoparse does not restrict the number of pages per run. See exactly what the free plan includes.

- 600+ templates remove the biggest barrier.

The hardest part of scraping is figuring out how to extract from an unfamiliar site. Octoparse’s template library covers social media (LinkedIn, Twitter, TikTok), lead generation (directories, contact lists), real estate (Zillow, property portals), e-commerce (Amazon, eBay, Shopify), jobs (Indeed, LinkedIn Jobs, Glassdoor), and maps (Google Maps). Many templates are free, and most users report reaching working data in under 10 minutes with one.

- AI auto-detection handles sites without templates.

For sites without a pre-built template, the AI auto-detection scans the page and configures extraction fields automatically. In our testing, this correctly mapped fields on 7 out of 10 standard e-commerce and directory pages on the first pass, no selectors, no XPath, no guesswork required.

- Data goes directly where you work.

CSV is the minimum. Octoparse also exports to Excel, JSON, Google Sheets, and directly into databases (MySQL, SQL Server, PostgreSQL) on applicable plans, which eliminates the manual import step for teams working in Sheets or running analytics pipelines. You can even connect Octoparse Mcp to your AI clients for scraping and further analysis.

Final Words

The right free web scraper comes down to three variables: technical skill, target site type, and volume needs.

Non-developers should start with Octoparse, the most complete free tier in this list and the only GUI tool combining AI auto-detection, cloud scheduling, IP rotation, and 600+ templates at no cost. For browser-based work without any installation, Data Scraper or Chat4Data get from URL to spreadsheet in under two minutes. Developers who need unlimited scale and full control should use Scrapy for large crawlers and Playwright for JavaScript-heavy pages.

➡ Start Scraping for Free Today!

FAQs about Free Website Scraper Tools

- What is the best free web scraper in 2026?

Octoparse is the top pick for most non-developers. It offers 600+ pre-built templates covering common sites, and AI auto-detection that configures extraction automatically. For browser-based jobs without any installation, Data Scraper is the fastest option for table-format data. For plain-English AI scraping, Chat4Data is the most accessible.

- Is it legal to use a free web scraper?

Scraping publicly accessible data is legal in most jurisdictions, but it depends on the target site’s Terms of Service and local data protection laws. The U.S. Ninth Circuit Court ruled in hiQ Labs v. LinkedIn (2022) that scraping public web data does not violate the Computer Fraud and Abuse Act. Always check a site’s robots.txt and Terms of Service before scraping, and never scrape personal data covered by GDPR or CCPA without a legal basis.

- What are the real limits of free web scraping tools?

Free plans typically restrict you to 500 pages/month (Data Scraper), 200 pages per run and 5 projects (ParseHub), $5 in monthly credits (Apify pricing), 100 API credits (ScrapingBot pricing), or 1 million tokens (Chat4Data). Open-source libraries (Scrapy, Playwright) have no usage caps but require you to manage proxies and infrastructure yourself.

- Can I export scraped data to Excel for free?

Yes. Octoparse, Chat4Data, Data Scraper, and ParseHub all export to CSV, which opens in Excel. Octoparse also exports directly to Excel format and Google Sheets. For one-click Excel output, Octoparse’s desktop app is the most direct free option.

- How do I avoid getting blocked when scraping?

Add a 1–3 second delay between requests, use a tool with IP rotation, and rotate your user-agent string. Octoparse includes in-built IP rotation. Chat4Data navigates like a real browser, which avoids most basic bot detection without extra configuration. Open-source tools require a third-party proxy service for serious anti-bot targets.

- What is a free AI web scraper?

An AI web scraper uses machine learning or large language models to identify data fields automatically, without requiring manual selector configuration. Octoparse’s AI auto-detection and Chat4Data’s natural language interface are both AI-powered free options. Chat4Data lets you scrape any public page by typing a plain English description, no selectors, no templates, no setup.