“Can you pull data from websites to Excel?”

You may have asked a question like the one above when you wanted to download data from a website, as Excel is an easy and common tool for data collection and analysis. With Excel, you can easily accomplish simple tasks like sorting, filtering, and outlining data and making charts based on it. When the data is highly structured, you can even perform advanced data analysis using pivot tables and regression models in Excel.

However, collecting data manually by repetitive typing, searching, copying, and pasting is extremely tedious. To solve this problem, we list 3 different solutions to scrape websites to Excel easily and quickly.

(Feel free to use this infographic on your site, but please provide credit and a link back to our blog.)

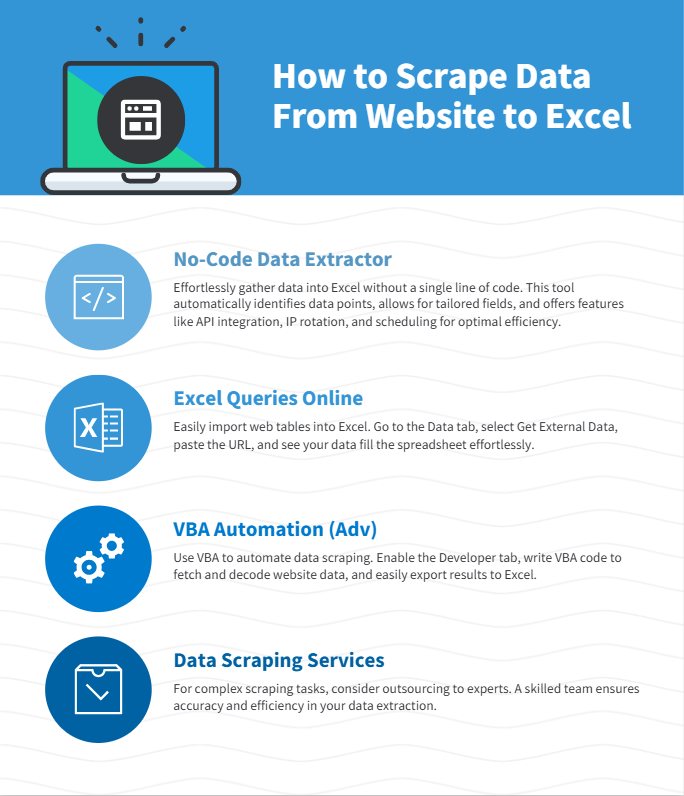

Method 1: No-Coding Crawler to Scrape Website to Excel

Web scraping is the most flexible way to get all kinds of data from webpages into Excel files. Many users find it hard because they have no coding experience. However, an easy web scraping tool like Octoparse can help you scrape data from websites to Excel without any coding.

As an easy web scraper, Octoparse provides AI-based auto-detection to extract data automatically. All you need to do is check the results and make some modifications. What’s more, Octoparse has advanced functions such as API access, IP rotation, cloud service, and scheduled scraping to help you get more data.

Turn website data into structured Excel, CSV, Google Sheets, and your database directly.

Scrape data easily with auto-detecting functions; no coding skills are required.

Preset scraping templates for popular websites to get data in a few clicks.

Reduce the risk of getting blocked with IP proxies and advanced API.

Cloud service to schedule data scraping at any time you want.

Here is a video guide on how to extract data from any website to Excel; watching it will give you some ideas. Alternatively, follow the simple steps in the next parts to scrape website data into Excel without any coding.

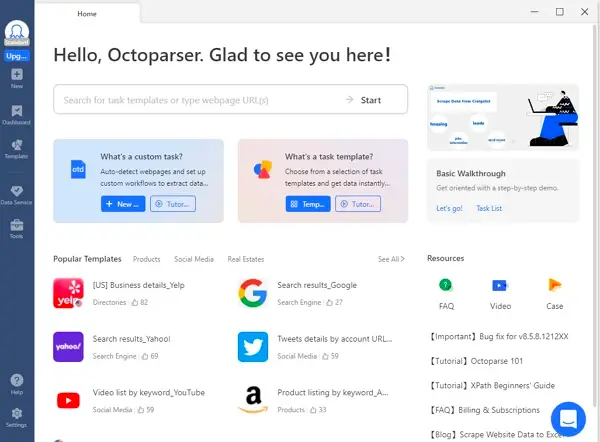

Online data scraping templates

You can also use the preset data scraping templates for popular sites such as Amazon, eBay, LinkedIn, and Google Maps to get webpage data in a few clicks. Try the online scraping template below without downloading any software to your device.

https://www.octoparse.com/template/contact-details-scraper

3 steps to scrape data from website to Excel

Step 1: Paste target website URL to begin auto-detecting.

After downloading and installing Octoparse on your device, paste the link of the site you want to scrape, and Octoparse will start auto-detecting.

Step 2: Customize the data field you want to extract.

A workflow will be created after auto-detection. You can easily change the data field according to your needs. There will be a Tips panel and you can follow the hints it gives.

Step 3: Download scraped website data into Excel

Run the task after you have checked all data fields. You can download the scraped data quickly in Excel/CSV formats to your local device, or save them to a database.

Web scraping project customer service

If time is your most valuable asset and you want to focus on your core business, outsourcing such complicated work to a proficient web scraping team with experience and expertise might be the best option. Scraping data from websites can be difficult because anti-scraping mechanisms restrict automated access. A proficient web scraping team can help you get data from websites properly and deliver structured data in an Excel sheet or any other format you need.

Here are some customer stories that show how the Octoparse web scraping service helps businesses of all sizes.

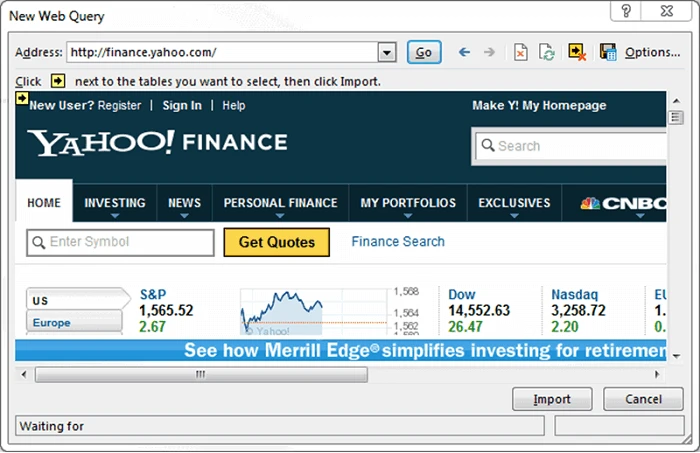

Method 2: Excel Web Queries to Scrape Website

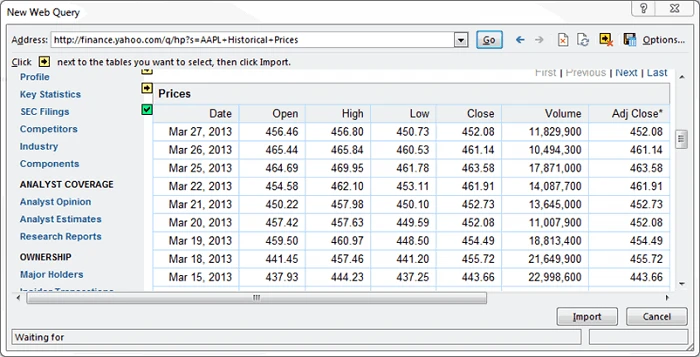

Besides copying and pasting data from a web page manually, you can use Excel Web Queries to pull tables directly from web pages into your spreadsheet. Web Queries automatically detect tables embedded in the web page’s HTML.

If you’ve ever copied data from a website, pasted it into Excel, and spent ten minutes fixing the layout — this is the part you’ll love. Excel has a built-in feature called Web Queries (or From Web in newer versions) that does all that work for you.

Web Queries can also be used in situations where a standard ODBC (Open Database Connectivity) connection is hard to create or maintain. You can scrape a table directly from a web page, as long as the table is part of the page’s HTML.

Overall, it’s great for extracting simple, static tables without extra software.

How to Use Excel Web Queries

1. Open Excel:

Go to the Data tab on the ribbon → click Get Data → From Other Sources → From Web.

(In older versions, you’ll find it under Data → Get External Data → From Web.)

2. Enter the web page URL.

Paste the link of the page that contains the table you want to extract. Excel will analyze the HTML structure and show a list of all the tables it detects.

3. Pick your target table.

You’ll see small previews — simply click the one that looks right. If the site is properly structured, you’ll notice neat rows and columns ready to go.

4. Load it into Excel.

Once you hit Load, Excel imports the table straight into your worksheet — formatted, sortable, and ready for formulas.

And that’s it — no VBA, no add-ons, no scraping script.

Now the web data is in your Excel worksheet, arranged in rows and columns.
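
If you are curious what "detecting tables in the HTML" involves, here is a minimal Python sketch of the idea. It is not how Excel implements Web Queries; the `TableExtractor` class and the sample HTML are made up for illustration, and the sketch ignores nested tables.

```python
from html.parser import HTMLParser


class TableExtractor(HTMLParser):
    """Collect every <table> in an HTML string as a list of rows."""

    def __init__(self):
        super().__init__()
        self.tables = []   # finished tables, each a list of rows
        self._row = None   # cells of the row being read, or None
        self._cell = None  # text fragments of the cell being read, or None

    def handle_starttag(self, tag, attrs):
        if tag == "table":
            self.tables.append([])
        elif tag == "tr" and self.tables:
            self._row = []
        elif tag in ("td", "th") and self._row is not None:
            self._cell = []

    def handle_data(self, data):
        # Only keep text that sits inside a table cell.
        if self._cell is not None:
            self._cell.append(data)

    def handle_endtag(self, tag):
        if tag in ("td", "th") and self._cell is not None:
            self._row.append("".join(self._cell).strip())
            self._cell = None
        elif tag == "tr" and self._row is not None:
            self.tables[-1].append(self._row)
            self._row = None


# Hypothetical page content, standing in for a downloaded web page.
sample_html = """
<table>
  <tr><th>Product</th><th>Price</th></tr>
  <tr><td>Laptop</td><td>899</td></tr>
  <tr><td>Mouse</td><td>19</td></tr>
</table>
"""

parser = TableExtractor()
parser.feed(sample_html)
print(parser.tables[0])
# [['Product', 'Price'], ['Laptop', '899'], ['Mouse', '19']]
```

Each inner list corresponds to one worksheet row, which is why cleanly marked-up tables import so neatly.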

When to use Web Queries

- When data is in clean HTML tables

- When you want a quick way to grab data from simple sites

- When you prefer to stay inside Excel without extra tools

Keep in mind that Web Queries don’t handle websites that require logins, rely on scrolling to load content, or have complex layouts.

Method 3: Scrape Website with Excel VBA

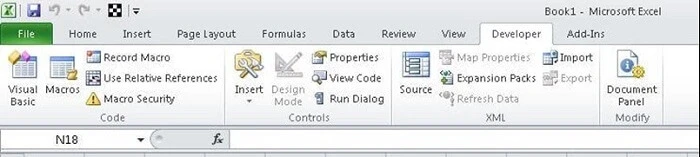

If you have some programming experience, Excel’s Visual Basic for Applications (VBA) allows you to write macros to scrape websites directly.

Most of us use formulas in Excel (e.g. =AVERAGE(…), =SUM(…), =IF(…), etc.) a lot, but are less familiar with the built-in language, Visual Basic for Applications, a.k.a. VBA. VBA programs are commonly known as “macros”, and Excel files that contain them are saved as .xlsm files.

Before using it, you need to enable the Developer tab in the ribbon (File → Options → Customize Ribbon → check Developer). Then set up your layout. In this developer interface, you can write VBA code attached to various events. To get started, see an introductory guide to VBA in Excel 2010.

Using Excel VBA is a bit technical, so it is not very friendly for non-programmers. VBA works by running macros, which are step-by-step procedures written in Visual Basic.

What VBA scraping can do:

- Send HTTP requests to download web pages

- Parse HTML to find and extract specific data

- Automate data imports for multiple pages or sites

- Export results immediately into Excel cells

How to Use Excel VBA to Scrape Website

Step 1: Open Excel

Press Alt + F11 to open the Visual Basic Editor.

In the left panel, right-click VBAProject → Insert → Module.

This is where your scraping script will live.

Step 2: Import the web libraries.

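In the Visual Basic Editor, go to Tools → References and check Microsoft XML, v6.0 and Microsoft HTML Object Library. These references allow your macro to interact with websites.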

Step 3: Initialize your request objects.

In your module, declare variables for the XMLHTTP object and the HTML document (for example, of types MSXML2.XMLHTTP60 and MSHTML.HTMLDocument, assuming the libraries from Step 2 are imported).

Step 4: Make a request to the target website.

Use the XMLHTTP object to make a GET request to the target URL, then parse the response text into an HTML document.
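
To show the shape of this step outside of Excel, here is a rough Python sketch of the same GET request. It is a stand-in for the XMLHTTP call, not VBA code. The `fetch_html` name, the User-Agent string, and the example URL are all hypothetical.

```python
from urllib.request import Request, urlopen


def fetch_html(url, timeout=10):
    """Send a GET request and return the response body as text."""
    # Some sites reject requests without a User-Agent; this value is a placeholder.
    request = Request(url, headers={"User-Agent": "excel-scraping-demo/0.1"})
    with urlopen(request, timeout=timeout) as response:
        # Fall back to UTF-8 when the server does not declare a charset.
        charset = response.headers.get_content_charset() or "utf-8"
        return response.read().decode(charset, errors="replace")


# Example call (placeholder URL, not run here):
# html = fetch_html("https://example.com/products")
```

The returned string is the raw HTML the server sends. Anything a page adds later with JavaScript will not be in it, which is the same limitation the XMLHTTP approach has.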

Step 5: Extract what you need.

Extract the needed data using DOM navigation or selectors (for example, getElementById or getElementsByTagName), and write the scraped values to worksheet cells.
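
The extract-and-export idea can be sketched in Python too. This is an illustration under assumptions, not the VBA macro itself: the class names `name` and `price`, the sample HTML, and the helper names are invented, and the output is a CSV file that Excel can open rather than cells written directly.

```python
import csv
from html.parser import HTMLParser


class ClassTextCollector(HTMLParser):
    """Collect the text of every element that carries a given CSS class."""

    def __init__(self, class_name):
        super().__init__()
        self.class_name = class_name
        self.texts = []
        self._tag = None   # tag name of the matching element being read
        self._depth = 0    # nesting depth of that tag name
        self._parts = []

    def handle_starttag(self, tag, attrs):
        if self._tag is None:
            classes = (dict(attrs).get("class") or "").split()
            if self.class_name in classes:
                self._tag, self._depth, self._parts = tag, 1, []
        elif tag == self._tag:
            self._depth += 1

    def handle_data(self, data):
        if self._tag is not None:
            self._parts.append(data)

    def handle_endtag(self, tag):
        if tag == self._tag:
            self._depth -= 1
            if self._depth == 0:
                self.texts.append("".join(self._parts).strip())
                self._tag = None


def extract_by_class(html, class_name):
    collector = ClassTextCollector(class_name)
    collector.feed(html)
    return collector.texts


def save_rows_for_excel(path, header, rows):
    """Write rows to a CSV file that Excel can open directly."""
    # utf-8-sig adds a byte-order mark, which helps Excel detect UTF-8.
    with open(path, "w", newline="", encoding="utf-8-sig") as handle:
        writer = csv.writer(handle)
        writer.writerow(header)
        writer.writerows(rows)


# Hypothetical product listing markup.
sample_html = """
<div class="item"><span class="name">Laptop</span><span class="price">899</span></div>
<div class="item"><span class="name">Mouse</span><span class="price">19</span></div>
"""
names = extract_by_class(sample_html, "name")
prices = extract_by_class(sample_html, "price")
rows = list(zip(names, prices))
print(rows)  # [('Laptop', '899'), ('Mouse', '19')]

# To create the file: save_rows_for_excel("products.csv", ["Name", "Price"], rows)
```

In VBA, the last step would be a loop that assigns each value to a cell instead of writing a file.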

Step 6: Clean up and repeat.

Set the object variables to Nothing to release memory. Repeat the steps to scrape multiple pages if needed.
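
Repeating the steps for several pages is essentially a loop with a pause between requests. Here is a small Python sketch of that pattern. The `scrape_pages` helper, the page URLs, and the fake site dictionary are hypothetical, and the fetch and parse functions are passed in so the loop can be tried without touching a real website.

```python
import time


def scrape_pages(page_urls, fetch, parse, delay_seconds=1.0):
    """Fetch and parse each URL in turn, pausing between requests."""
    all_rows = []
    for index, url in enumerate(page_urls):
        if index:
            time.sleep(delay_seconds)  # pause so the server is not flooded
        html = fetch(url)
        all_rows.extend(parse(html))
    return all_rows


# A fake two-page site, so the loop runs without any network access.
fake_site = {
    "https://example.com/list?page=1": "Laptop,Mouse",
    "https://example.com/list?page=2": "Keyboard",
}
urls = [f"https://example.com/list?page={n}" for n in (1, 2)]

rows = scrape_pages(
    urls,
    fetch=fake_site.get,
    parse=lambda text: text.split(","),
    delay_seconds=0,
)
print(rows)  # ['Laptop', 'Mouse', 'Keyboard']
```

Python frees objects automatically, so there is no counterpart here to the explicit clean-up that VBA needs.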

VBA takes a little setup, but once it’s running, you’re effectively turning Excel into a lightweight web scraper — ideal for pulling data from structured sites that don’t rely heavily on JavaScript.

Which Method Should You Choose?

| Method | Ease of Use | Best For | Requires Coding | Handles Complex Sites? |
|---|---|---|---|---|
| Octoparse (No Code) | Very Easy | Beginners, bulk scraping, complex sites | No | Yes |
| Excel Web Queries | Easy | Simple tables on public websites | No | Limited |
| Excel VBA Scripts | Moderate to Hard | Programmers, complex custom tasks | Yes | Yes, if not JavaScript-heavy |

Conclusion

Now you have learned 3 different ways to pull website data into Excel. Choose the one that best suits your situation.

If you don’t have any coding skills, or you want to save time and energy on data collection work, a no-code web crawler like Octoparse is the fastest way to scrape any website without programming.

If you want to work fully inside Excel, web queries and VBA macros offer additional ways to import data.

Combining these methods with Excel’s powerful data tools, you can turn website data into insights that grow your business.

Common Questions About Scraping Website into Excel

1. Can I scrape any website data into Excel?

Mostly yes — as long as the data is publicly available. Excel can pull data from open web pages, APIs, and structured HTML tables.

However, always check the website’s robots.txt file and terms of use before scraping. Some sites restrict automated access, and ignoring that can lead to blocked requests or legal issues.
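
If you script your scraping, the robots.txt check can be automated. Below is a minimal Python sketch that uses the standard library's `urllib.robotparser`. The `is_allowed` helper, the user agent name, and the sample rules are hypothetical, and passing this check does not replace reading the site's terms of use.

```python
from urllib.robotparser import RobotFileParser


def is_allowed(robots_txt, user_agent, url):
    """Return True if the robots.txt text permits user_agent to fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


# Sample rules, standing in for a downloaded robots.txt file.
sample_robots = """\
User-agent: *
Disallow: /private/
"""

print(is_allowed(sample_robots, "excel-scraping-demo", "https://example.com/products"))
# True
print(is_allowed(sample_robots, "excel-scraping-demo", "https://example.com/private/data"))
# False
```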

If you’re unsure, resources like Harvard’s “Ethical Web Scraping Guide” and DataCamp’s scraping ethics overview can help clarify what’s acceptable.

2. Which method is best for beginners?

If you’re just starting out, Octoparse is the easiest way to go. It’s a visual, no-code scraper — you simply click the elements you want, and it builds the workflow for you. No HTML, no VBA, no formulas.

Octoparse also exports directly to Excel or CSV, so it fits perfectly into an Excel-based workflow.

3. Can Excel Web Queries handle dynamic websites?

No, Web Queries work best with static HTML tables and simple pages.

4. Do I need to know programming for VBA scraping?

Yes, VBA requires basic knowledge of Excel macros and Visual Basic.

If you’re new, start small. Microsoft’s official VBA documentation explains every object and method clearly, and websites like AnalystCave’s VBA tutorials or Automate Excel offer easy, hands-on examples.

Once you’ve run a few scripts, you’ll realize VBA scraping is less about “coding” and more about telling Excel where to look, what to grab, and where to place it.