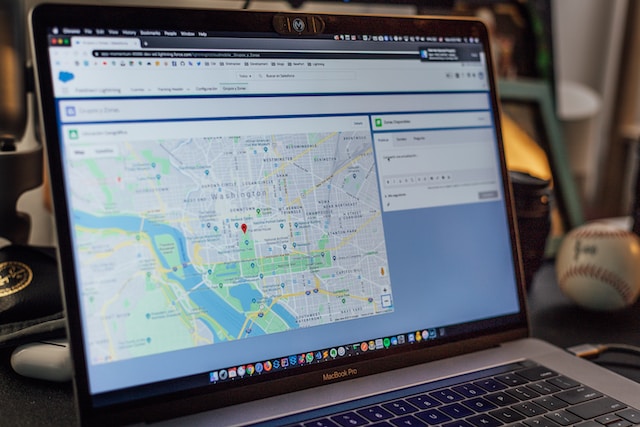

Google Maps isn’t just for directions; it’s also a goldmine for local business data. If you’re building a list of local shops or service providers, Google Maps exposes useful details such as phone numbers, websites, addresses, opening hours, reviews, zip codes, and latitude and longitude.

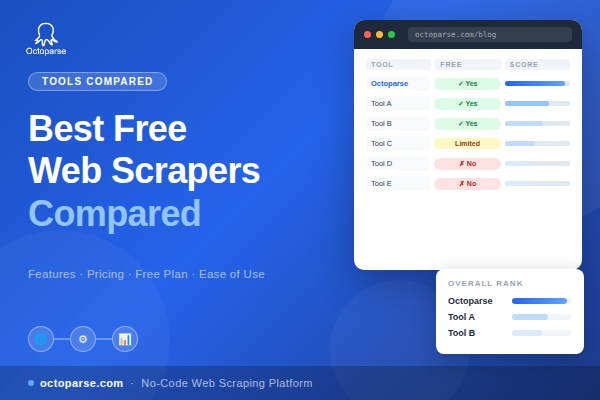

In this article, we will review the 5 best Google Maps crawlers to help you scrape data from Google Maps easily and quickly. Both coding and no-coding methods are covered, including a free online Google Maps crawler you can start using right away.

5 Best Google Maps Scrapers

1. Octoparse (Online Google Maps Crawler)

Free Google Maps Crawler

Octoparse, one of the best web scraping tools, can scrape Google Maps data without requiring any coding skills. With its auto-detecting function, you can easily build a workflow to extract Google Maps data, including locations, store names, phone numbers, reviews, opening hours, and other business information.

Advanced functions such as IP rotation, XPath, pagination, cloud service, etc. are also provided to help you customize the crawler and get the desired data.

Finally, you can export all data to Google Sheets, Excel, CSV, or a database. Download Octoparse and follow the tutorial here to crawl Google Maps data.

Octoparse Google Maps Scraping Template

Octoparse offers a ready-to-use online template for Google Maps scraping. Just open it in your browser, enter a keyword or URL, and get a spreadsheet of business details—like names, phone numbers, addresses, websites, and ratings—in minutes. Try the Google Maps Scraper template below to start scraping instantly.

https://www.octoparse.com/template/google-maps-scraper-store-details-by-keyword

🥰Pros:

- No coding is required with the user-friendly interface.

- Allows for automated, scheduled scraping tasks.

- Offers cloud-based scraping options.

- Supports multiple export formats: CSV, HTML, Excel, JSON, XML, Google Sheets, and databases (SQL Server and MySQL).

- Supports integration with other tools and services via the Open API.

- Supports scraper customization.

- Handles different anti-scraping measures.

Video guide: Scrape Google Maps data

2. Google Maps API

Yes, the Google Maps Platform provides a Places API for developers! It’s one of the best ways to gather place data from Google Maps, and developers can get up-to-date information about millions of locations by sending HTTP requests to the API.

To use the Places API, you should first set up an account and create your API key. The Places API is not free and uses a pay-as-you-go pricing model.

Keep in mind, though, that the available data fields are limited, so it might not cover all the information you’re looking for.
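
As a rough illustration of what such an HTTP request looks like, the sketch below builds a Nearby Search URL for the legacy Places API web service and pulls a few fields out of a response. The API key, the coordinates, and the sample response are placeholders made up for this example, and the helper names are hypothetical; check the current Places API documentation for the endpoints, fields, and pricing that apply to your account.

```python
import json
from urllib.parse import urlencode

# Endpoint of the legacy Places API "Nearby Search" web service.
NEARBY_SEARCH_URL = "https://maps.googleapis.com/maps/api/place/nearbysearch/json"


def build_nearby_search_url(api_key, lat, lng, radius_m, place_type):
    """Return a Nearby Search URL filtered by location, radius, and type."""
    params = {
        "location": f"{lat},{lng}",
        "radius": radius_m,    # search radius in meters
        "type": place_type,    # e.g. "cafe" or "restaurant"
        "key": api_key,
    }
    return f"{NEARBY_SEARCH_URL}?{urlencode(params)}"


def extract_places(response_text):
    """Pick a few common fields out of a Nearby Search JSON response."""
    payload = json.loads(response_text)
    places = []
    for item in payload.get("results", []):
        location = item.get("geometry", {}).get("location", {})
        places.append({
            "name": item.get("name"),
            "address": item.get("vicinity"),
            "rating": item.get("rating"),
            "lat": location.get("lat"),
            "lng": location.get("lng"),
        })
    return places


# Hypothetical response, trimmed to the fields used above (not real data).
SAMPLE_RESPONSE = json.dumps({
    "status": "OK",
    "results": [{
        "name": "Example Cafe",
        "vicinity": "1 Example Street",
        "rating": 4.5,
        "geometry": {"location": {"lat": 40.0, "lng": -75.0}},
    }],
})

url = build_nearby_search_url("YOUR_API_KEY", 40.0, -75.0, 1500, "cafe")
places = extract_places(SAMPLE_RESPONSE)
```

To send the request for real, you would pass `url` to an HTTP client such as `urllib.request.urlopen` and feed the response body to `extract_places`. Keep in mind that each call counts against your quota and billing.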

🥰Pros:

- You can get up-to-date and accurate information.

- Allows developers to filter and sort place data based on specific criteria, such as type, location, and radius.

- Easy to integrate with various applications using straightforward API calls.

- Available for web, Android, and iOS.

🤯Cons:

- The pricing structure is complicated, and costs rise quickly for large-volume extraction.

- There are usage quotas and rate limits that can restrict the number of API calls you can make within a given time period.

- Requires setting up billing with Google Cloud.

3. Python Framework or Library

You can use powerful Python frameworks and libraries such as Scrapy and Beautiful Soup to build a custom crawler and extract exactly the data you need.

Specifically,

- Scrapy is a framework that can download, clean, and store data from web pages, and has a lot of built-in code to save you time.

- Beautiful Soup, in contrast, is a library that helps programmers quickly extract data from HTML that has already been downloaded.

Both Scrapy and Beautiful Soup require a reasonable to advanced level of programming knowledge to use effectively.

Scrapy is a powerful but complex web scraping framework with a steep learning curve, involving concepts like asynchronous requests, spiders, pipelines, and project structure. It is best suited for developers comfortable with Python and web scraping architectures.

Beautiful Soup is generally considered easier for beginners and quicker to start with, but it still demands familiarity with Python programming and HTML, especially since it only handles parsing and not crawling or browser automation. This means beginners must still write custom code to handle page navigation, requests, and data extraction, which can present a challenging barrier to entry for those new to programming or web scraping.
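
To make the "parsing only" point concrete, here is a minimal sketch of the parsing step on its own. To keep it runnable without extra installs, it uses `html.parser` from Python's standard library rather than Beautiful Soup. The HTML snippet and the `place-name` class are invented for illustration and do not reflect Google Maps' actual markup. Fetching the page, following pagination, and rendering JavaScript would all need separate code.

```python
from html.parser import HTMLParser

# Invented markup for illustration; real pages are structured differently.
SAMPLE_HTML = """
<ul>
  <li><span class="place-name">Example Cafe</span> <span class="phone">555-0100</span></li>
  <li><span class="place-name">Example Bakery</span> <span class="phone">555-0101</span></li>
</ul>
"""


class PlaceNameParser(HTMLParser):
    """Collect the text of every <span class="place-name"> element."""

    def __init__(self):
        super().__init__()
        self.names = []
        self._in_name = False

    def handle_starttag(self, tag, attrs):
        # attrs is a list of (name, value) pairs for the opening tag.
        if tag == "span" and ("class", "place-name") in attrs:
            self._in_name = True

    def handle_data(self, data):
        # Only keep text that appears inside a place-name span.
        if self._in_name:
            self.names.append(data.strip())

    def handle_endtag(self, tag):
        if tag == "span":
            self._in_name = False


parser = PlaceNameParser()
parser.feed(SAMPLE_HTML)
names = parser.names
```

With Beautiful Soup the same extraction is shorter, but the division of labor is identical: the library only reads markup you have already obtained.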

🥰Pros:

- Full control over the scraping process.

- Typically free of charge and open-source.

- Scales as needed.

- Easy to integrate the scraper with other Python-based tools and services, such as data analysis libraries (Pandas, NumPy) and machine learning frameworks (TensorFlow, Scikit-learn).

- Directly interface with APIs and databases.

🤯Cons:

- Coding required.

- Time-consuming and requires ongoing maintenance.

- Have to deal with anti-scraping measures.

- May incur costs related to server infrastructure, proxies, and data storage solutions.

- Need to handle failures caused by network errors, changes in HTML structure, and dynamic content loading.
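
One common way to cope with transient network errors is to retry with exponential backoff. The sketch below is a generic pattern, not taken from any particular scraping library; `fetch_with_retry`, its parameters, and the `flaky_fetch` stand-in are hypothetical names for this example.

```python
import time


def fetch_with_retry(fetch, url, max_attempts=4, base_delay=1.0, sleep=time.sleep):
    """Call fetch(url), retrying on OSError with exponentially growing delays."""
    for attempt in range(1, max_attempts + 1):
        try:
            return fetch(url)
        except OSError:
            # urllib.error.URLError and socket timeouts are OSError subclasses.
            if attempt == max_attempts:
                raise
            # Wait base_delay, then 2x, 4x, ... before the next attempt.
            sleep(base_delay * 2 ** (attempt - 1))


# Stand-in for a real HTTP call: fails twice, then succeeds.
calls = {"count": 0}


def flaky_fetch(url):
    calls["count"] += 1
    if calls["count"] < 3:
        raise ConnectionError("simulated network failure")
    return f"<html>content of {url}</html>"


# Record the delays instead of actually sleeping, so the demo runs instantly.
delays = []
html = fetch_with_retry(flaky_fetch, "https://example.com/", sleep=delays.append)
```

Passing `sleep` as a parameter is a small design choice that keeps the function testable. In real use you would leave the default `time.sleep` in place and supply your own `fetch` function.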

4. Open-source Projects on GitHub

You can find several open-source Google Maps scrapers on GitHub. These projects can save you time, since much of the work is already done.

However, most still require basic coding skills to run, and setup can be tricky for non-developers. Many scripts are no longer maintained, and data output is often limited to plain text (.txt) files. If you’re aiming for large-scale or structured data extraction, this may not be the most reliable option.

🥰Pros:

- Free access and no subscription fee.

- Save time compared to developing a scraper from scratch.

🤯Cons:

- Requires programming knowledge.

- Not all projects are actively maintained, which can lead to issues if Google changes its site structure or anti-scraping measures.

- Using open-source code can pose security risks if the code contains vulnerabilities or malicious components.

5. webscraper.io Extension

The webscraper.io extension is one of the most popular browser-based web scraping tools. You don’t have to write code or download desktop software to scrape data; a Chrome extension is enough for most cases.

However, the extension is less capable when handling complex page structures or scraping large volumes of data.

🥰Pros:

- No code solution.

- No download. Fast setup time.

- Supports scheduling and various export formats such as CSV, JSON, and Excel.

🤯Cons:

- Less capable of handling large-scale scraping tasks.

- May struggle with sophisticated anti-scraping mechanisms.

- Need manual updates to scraping rules.

Conclusion

Now, you have a general idea about how to scrape data from Google Maps with these Google Maps crawlers. According to your own needs, select the most suitable one, whether it is cloud-based or desktop-based, coding or no-coding. Octoparse is always your best choice if you want to scrape Google Maps data easily and quickly.

Common Questions About Google Maps Scrapers

- What is a Google Maps Scraper?

A Google Maps scraper is a tool that extracts business information from Google Maps, such as names, phone numbers, addresses, ratings, reviews, and more. This is especially useful for lead generation, market research, or building a business directory.

- How to add Google Maps locations to Excel?

The easiest way is to use a Google Maps data extractor like Octoparse. Once you enter a URL, Octoparse pulls business data, including names, coordinates, and addresses, into a spreadsheet. When the scraping is complete, you can export the data directly to Excel in one click, with no formatting or copy-pasting needed.

- What is the Best Free Google Maps Scraper?

Octoparse is one of the best free tools for scraping Google Maps, perfect for both beginners and experienced users. It provides an online template that lets you scrape business details from Google Maps without writing any code. Templates for Amazon, Twitter, email scraping, and more are also offered to help boost your business.