Airbnb is a popular site that helps travelers find different types of accommodation, such as homes, beach houses, and rentals, across the globe. It is not only for travelers: owners of homes and other properties can also list them on Airbnb for rent.

As a traveler or a property lister, you can use the filters on the site to search for the information you want. But if you want to collect and compare information about rents, properties, amenities, and other factors for Airbnb data analysis, research, or related tasks, you need the help of an Airbnb scraper.

An Airbnb scraper tool lets you quickly scrape, process, and collect all the data you need from the site in a simple spreadsheet format that is easy to read, navigate, and use. In this article, you will learn about the best tool to scrape Airbnb easily.

Is It Legal to Scrape Airbnb?

Scraping data that is publicly available and visible on the web is generally legal, provided that the applicable rules and regulations are followed. These rules may vary and become more stringent when the extracted data contains personal information or content protected by Airbnb's copyright.

In general, most online services, including Airbnb, prohibit unauthorized data extraction by automated agents in their terms of service, which makes this a matter of contract law. However, the applicable laws differ from country to country, depending on each country's policies on automated agents and unauthorized access to a system.

How to Scrape Airbnb Data Without Coding

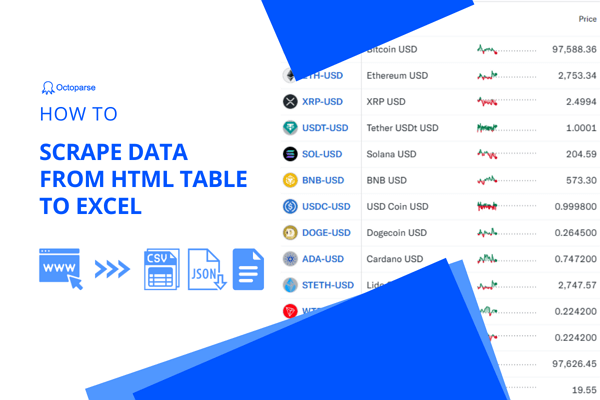

If you want to scrape Airbnb data without writing code, Octoparse is a good fit. It can extract data from Airbnb easily and quickly. It runs on Windows and Mac, and its user-friendly interface guides you through the otherwise complicated process in a few simple steps. It also provides preset Airbnb templates that export data to Excel or other file formats in only a few clicks.

Steps to scrape Airbnb data using Octoparse

Step 1: Paste the target Airbnb page link

If you prefer to build the scraper yourself rather than use a preset template, the process is still straightforward. Paste the target Airbnb link into the Octoparse main interface and click the Start button, and the auto-detection process will begin.

Step 2: Customize workflow to extract desired Airbnb data

After the quick auto-detection finishes, preview the detected data fields and create a workflow. You can then customize the details, such as loop items, XPath, and pagination, so that the workflow meets your extraction needs.
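For readers unfamiliar with the terms, the following is a minimal Python sketch of what a loop item, a field XPath, and a pagination XPath mean. It does not show how Octoparse works internally. The markup, class names, and the `extract_listings` function are made up for illustration, and real Airbnb pages are structured differently.

```python
import xml.etree.ElementTree as ET

# Made-up, well-formed markup standing in for a page of listing cards.
# Real Airbnb pages use different tags and class names.
SAMPLE_PAGE = """
<div>
  <div class="listing"><span class="title">Beach house</span><span class="price">120</span></div>
  <div class="listing"><span class="title">City loft</span><span class="price">95</span></div>
  <a class="next" href="/page/2">Next</a>
</div>
"""


def extract_listings(markup):
    """Return (rows, next_page_href) extracted from the sample markup."""
    root = ET.fromstring(markup.strip())
    rows = []
    # "Loop item": one XPath selects every repeated listing card.
    for card in root.findall(".//div[@class='listing']"):
        # Field XPaths are evaluated relative to the current card.
        rows.append({
            "title": card.findtext("span[@class='title']"),
            "price": int(card.findtext("span[@class='price']")),
        })
    # "Pagination": a separate XPath locates the link to the next page.
    next_link = root.find(".//a[@class='next']")
    next_href = next_link.get("href") if next_link is not None else None
    return rows, next_href


rows, next_href = extract_listings(SAMPLE_PAGE)
```

The standard-library `xml.etree.ElementTree` module supports only a subset of XPath and requires well-formed markup, which is enough for this illustration but not for real web pages.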

Step 3: Export Airbnb data in Excel, CSV, and database

Now, click the Run button in the top-right corner to start the extraction. Octoparse can run the task on your local device or in its cloud service; choose whichever suits you, as both are quick. When the run finishes, export the data to Excel, CSV, or a database.
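Once the data is in a CSV file, comparing rents becomes a short script. The sketch below averages prices per location. The column names `location` and `price`, the `$` price format, and the sample rows are assumptions made for illustration; an actual export may use different headers and formats.

```python
import csv
import io
from collections import defaultdict


def average_price_by_location(csv_file):
    """Average the 'price' column per 'location' (assumed column names)."""
    totals = defaultdict(lambda: [0.0, 0])  # location -> [price sum, row count]
    for row in csv.DictReader(csv_file):
        # Strip currency symbols and thousands separators, e.g. "$1,200".
        price = float(row["price"].replace("$", "").replace(",", ""))
        bucket = totals[row["location"]]
        bucket[0] += price
        bucket[1] += 1
    return {loc: total / count for loc, (total, count) in totals.items()}


# Made-up rows standing in for an exported file.
SAMPLE_EXPORT = (
    "name,location,price\n"
    "Beach house,Lisbon,$120\n"
    "City loft,Lisbon,$80\n"
    "Mountain cabin,Porto,$95\n"
)

averages = average_price_by_location(io.StringIO(SAMPLE_EXPORT))
```

For a real export, pass an open file instead of the in-memory sample, for example `open("airbnb_export.csv", newline="", encoding="utf-8")`, where the file name is a placeholder.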

Download Octoparse and follow the steps above to try it for free. If you still have questions, read this guide on scraping hotel details from Airbnb. You can also scrape Booking.com with similar steps.

Preset Template – Scrape Airbnb with zero setup

Hundreds of preset templates are available on Octoparse, and they can pull data from most mainstream websites without any setup. With a preset template, you only need to enter a few required parameters, and Octoparse takes care of the rest and returns the latest data. For Airbnb, three templates are worth a try. You can scrape Airbnb data by keyword or by URL to get hotel names, prices, addresses, and more. If you need a closer look at Airbnb rooms, try Airbnb Room Details Scraper, which pulls room details such as location, number of bedrooms, number of bathrooms, and rating.

https://www.octoparse.com/template/airbnb-scraper-by-keyword

https://www.octoparse.com/template/airbnb-scraper-by-url

https://www.octoparse.com/template/airbnb-room-details-scraper

Scrape Airbnb Data with Python

If you have prior coding experience, Python is also a good tool for scraping Airbnb data. With Python, you can write custom code that extracts exactly the data you need from Airbnb.

With this method, the script is written in Python and drives a browser through Selenium. Selenium automates the browser, which makes it suitable for scraping AJAX sites where content loads dynamically. Using Selenium, you can automate Chrome to open Airbnb pages and then read the data from them through Selenium's API.
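A minimal sketch of this approach might look like the following, assuming Selenium 4 and a local Chrome installation. The search URL pattern, the function names, and the CSS selector passed by the caller are assumptions rather than anything Airbnb documents. Page markup changes often, so verify both in a browser before relying on them.

```python
from urllib.parse import quote


def build_search_url(location):
    # Assumed URL pattern for a location search; verify it in a browser first.
    return f"https://www.airbnb.com/s/{quote(location, safe='')}/homes"


def scrape_listing_titles(location, css_selector, timeout=15):
    """Open the search page in headless Chrome and return the matched text."""
    # Imported here so the URL helper above works without Selenium installed.
    from selenium import webdriver
    from selenium.webdriver.chrome.options import Options
    from selenium.webdriver.common.by import By
    from selenium.webdriver.support import expected_conditions as EC
    from selenium.webdriver.support.ui import WebDriverWait

    options = Options()
    options.add_argument("--headless=new")  # run Chrome without a window
    driver = webdriver.Chrome(options=options)
    try:
        driver.get(build_search_url(location))
        # Listings load via AJAX, so wait until the elements are present
        # instead of reading the page immediately.
        elements = WebDriverWait(driver, timeout).until(
            EC.presence_of_all_elements_located((By.CSS_SELECTOR, css_selector))
        )
        return [element.text for element in elements]
    finally:
        driver.quit()  # always close the browser, even on errors
```

A call could look like `scrape_listing_titles("Lisbon", "[data-testid='listing-card-title']")`, where the selector is a placeholder to be replaced with one taken from the live page. The explicit wait is the key design choice: it is what lets the script handle content that appears only after the initial page load.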

Scraping data from Airbnb can be helpful in several ways. If you are comfortable with coding and its technicalities, Python can be a good option. If you would rather take a much easier route, the Octoparse free trial is worth a try.