Zillow is one of the most popular websites for searching for homes, checking home values, and finding real estate agents. It also holds a large amount of data about local homes, their prices, and the realtors who list them. That’s why scraped Zillow data is useful in your own tools and third-party applications for commercial real estate needs.

In the following sections, you can learn how to extract data from Zillow quickly and easily with a no-coding Zillow data scraper.

Can You Scrape Zillow Data

Scraping Zillow for real estate information is one of the latest trends in home buying, and it can give you a competitive edge if used wisely. Before jumping in, note that there are rules you need to follow when scraping the site so that you don’t cross any ethical or legal boundaries. Scraping the data that is displayed on screen when you search for houses in a geographical location isn’t illegal.

The information is available to anyone who has internet access and can view the website in a browser. Zillow also makes its data available through its own API, which lets businesses integrate commercially with Zillow Group in the real estate market. Using the Zillow API is the best way to access the information commercially without violating Zillow’s ethics and policies.

The data you can scrape from Zillow covers the houses for sale in any city in its database, including addresses, the number of bedrooms, the number of bathrooms, price, and more. The extracted data can be exported in formats such as .csv, .txt, and .xlsx, or to your own database.

How to Scrape Zillow Without Coding

In this part, we will tell you how to scrape data from Zillow without any coding. The objective is to get details of the address, price, listing name, the assigned realtor, and all the information made available to view on the website. For this, we will be using the web scraping tool, Octoparse.

Octoparse is a popular web scraping tool that is widely used for scraping data from websites. It can automatically detect data like pictures, pages, and lists on web pages as soon as you open them in the built-in browser. With this, you can extract data across multiple pages and download up to 10,000 links at once.

Octoparse also provides a preset scraping template that gets Zillow property listing data within a few clicks. You can use the online Zillow data scraping template below directly by entering only a few parameters.

https://www.octoparse.com/template/zillow-scraper

3 steps to extract real estate data from Zillow easily

First, download and install Octoparse on your device, and sign up for a free account if you’re a new user. Then follow the simple steps below to start scraping Zillow data.

Step 1: Paste Zillow page link into Octoparse

Copy the URL of the Zillow page you need to scrape, and paste it into the search box of Octoparse. Click the Start button; Octoparse enters auto-detect mode by default.

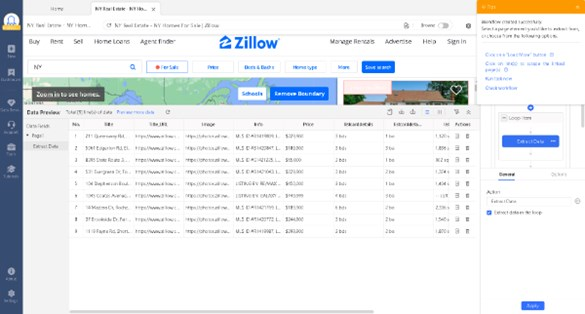

Step 2: Create a Zillow data crawler

Click on the Create Workflow button after the quick detection. You can make changes to the data fields with the prompts on the Tips panel.

Step 3: Extract and download data from Zillow

At the bottom of the screen, you can observe that the preview of data to be extracted is displayed in a table format. Check if it contains all the necessary data. If not, simply select the data you need, and it will be added to a new column. The field names can be renamed by selecting from the pre-defined list or entering them on your own.

Click the Run button to start the data extraction task on your device. Once the task has completed, you can export the data to your local system in your desired format.

You can also use similar steps to get data from other popular real estate sites like Realtor.com, or you can read the user guide about scraping Realtor data.

Zillow Web Scraping with Python

Zillow has some of the highest volumes of data among the top real estate listing websites. Scraping its data can be very useful to realtors or others with a commercial interest in listings. Web scraping is easy to do with the various modules and methods built for Python. Some of the packages widely used for web scraping are Beautiful Soup, MechanicalSoup, Selenium, and Scrapy.

In the following part, we will use Beautiful Soup to scrape data from Zillow. Open any Python IDE and run the following code. I have used PyCharm here.
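
A minimal sketch of what such a program might look like, under several assumptions: the CSS selectors are a guess at how Zillow marks up its listing cards and should be checked against the live page, the sample HTML is made up so the example runs offline, and the names `fetch_html`, `parse_listings`, and `SAMPLE_HTML` are illustrative only.

```python
import urllib.request

import pandas as pd
from bs4 import BeautifulSoup

# Assumed selectors: Zillow's markup changes often, so verify these
# against the live page in your browser's developer tools.
CARD_SELECTOR = "article"
PRICE_SELECTOR = '[data-test="property-card-price"]'
ADDRESS_SELECTOR = "address"


def fetch_html(url: str) -> str:
    """Download a page with a browser-like User-Agent header."""
    request = urllib.request.Request(url, headers={"User-Agent": "Mozilla/5.0"})
    with urllib.request.urlopen(request, timeout=30) as response:
        return response.read().decode("utf-8", errors="replace")


def parse_listings(html: str) -> pd.DataFrame:
    """Collect the price and address of every listing card in the HTML."""
    soup = BeautifulSoup(html, "html.parser")
    rows = []
    for card in soup.select(CARD_SELECTOR):
        price = card.select_one(PRICE_SELECTOR)
        address = card.select_one(ADDRESS_SELECTOR)
        if price is None or address is None:
            continue  # skip cards that do not match the assumed layout
        rows.append(
            {
                "price": price.get_text(strip=True),
                "address": address.get_text(strip=True),
            }
        )
    return pd.DataFrame(rows, columns=["price", "address"])


# Made-up sample markup standing in for a downloaded search results page.
SAMPLE_HTML = """
<article>
  <address>12 Example St APT 4, New York, NY 10001</address>
  <span data-test="property-card-price">$1,250,000</span>
</article>
<article>
  <address>34 Sample Ave, New York, NY 10002</address>
  <span data-test="property-card-price">$899,000</span>
</article>
"""

df = parse_listings(SAMPLE_HTML)
print(df)
```

To try it on a real page, pass the result of `fetch_html(url)` to `parse_listings` instead of `SAMPLE_HTML`, where `url` is a Zillow search results link. Zillow may refuse automated requests or render listings with JavaScript, in which case the data frame comes back empty and a browser-driven tool such as Selenium may be the better fit.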

The pandas data frame df contains information about the listings in Manhattan, New York. The program uses loops to extract the price and address of each listed house. You can extend it to scrape other data, such as the number of bedrooms and bathrooms. The result can be saved locally as a CSV file with df.to_csv("file_path"), where file_path is replaced by the path of the CSV file, including the file name.
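
One way to extend the program with bedroom and bathroom counts can be sketched as follows. It assumes each listing card shows a details line such as "3 bds 2 ba 1,450 sqft" and a price such as "$1,250,000"; both formats, along with the helper names `parse_price` and `parse_details`, are assumptions for illustration, so adjust the pattern to whatever text you actually scrape.

```python
import re
from typing import Optional

# Assumed format of a card's details line, e.g. "3 bds 2 ba 1,450 sqft".
DETAILS_PATTERN = re.compile(
    r"(?P<beds>\d+)\s*bds?\b.*?(?P<baths>\d+(?:\.\d+)?)\s*ba\b",
    re.IGNORECASE,
)


def parse_price(text: str) -> Optional[int]:
    """Turn a price string such as "$1,250,000" into an integer."""
    digits = re.sub(r"[^\d]", "", text)
    return int(digits) if digits else None


def parse_details(text: str) -> dict:
    """Extract bedroom and bathroom counts from a details line."""
    match = DETAILS_PATTERN.search(text)
    if match is None:
        # Lines that do not fit the assumed format yield empty values.
        return {"bedrooms": None, "bathrooms": None}
    return {
        "bedrooms": int(match.group("beds")),
        "bathrooms": float(match.group("baths")),
    }


print(parse_price("$1,250,000"))
print(parse_details("3 bds 2 ba 1,450 sqft"))
```

Storing the price as a number rather than text makes the saved CSV easier to sort and filter later. Abbreviated prices such as "$1.2M" are not handled by this simple digit filter and would need their own rule.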

Final Thoughts

The digital real estate landscape is changing rapidly, with new services and tools emerging almost daily. Zillow has continued to grow significantly in recent years, becoming one of the largest real estate websites and services in the world. The company’s primary business model is centered around providing users with information about homes for sale, housing market trends, and other related data.

Now that you have learned the basics of scraping data from Zillow, the next step is to come up with ideas for putting the scraped information to good use. Explore more of Octoparse’s features and other Python web scraping methods to deepen your fluency in gathering information through web scraping.