A web crawler can collect the information available on a web page. However, many people who want data quickly and simply choose to buy it directly instead.

Collecting static data is fairly easy and cheap. But data on most websites, especially e-commerce and social media sites, is continuously updated, so even historical data once considered high quality will inevitably become somewhat outdated.

Purchasing data directly from a third party, such as a professional web crawling service, can be very costly, though the data delivered is usually more reliable and up to date.

If your budget is limited, the best way to collect data from websites is to build a web crawler on your own. With your own crawler, you can monitor any website and collect the latest data from these dynamic sites whenever you need it. Although learning to build a web crawler may take a newcomer some time, it is well worth the effort.

Of course, you cannot become proficient in programming in a short time, but you can learn a versatile data extraction tool that lets you build a web crawler to scrape web data automatically. Collecting web data with your own crawler not only lowers the cost but also lets you modify the crawler whenever the website changes.

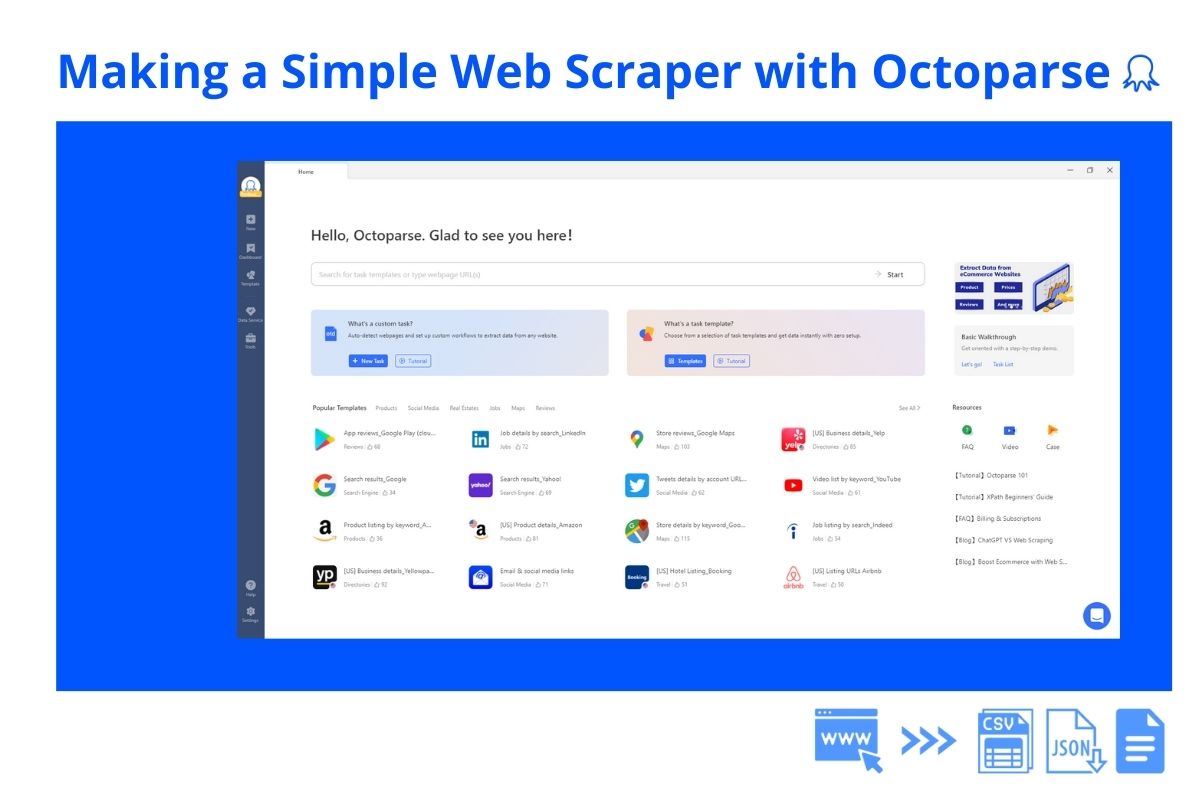

With Octoparse, it’s easy to build your own crawler.

Let’s walk through an example of scraping data from octoparse.com, covering in detail how to extract data from multiple web pages with Octoparse.

After launching Octoparse, open the URL (http://www.octoparse.com/cases-tutorial) in the built-in browser. Once you save the configuration, the Workflow automatically adds a “Go To Web Page” action.

Since we want data from every page of the tutorial list, we first need to add a pagination action. Click the “Next” button to create a pagination loop.
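Octoparse builds this loop for you without any code. For readers curious about the logic, here is a rough plain-Python sketch of a pagination loop; it is an illustration of the idea, not how Octoparse works internally. It assumes a hypothetical set of in-memory pages (the `PAGES` dict and its URLs are made up) so it runs offline, and it follows any link whose text is “Next” until no such link remains.

```python
from html.parser import HTMLParser

# Hypothetical in-memory "site": URL -> HTML. A real crawler would download
# these pages over HTTP; static strings keep the sketch runnable offline.
PAGES = {
    "/cases-tutorial": '<a href="/t/1">One</a><a href="/cases-tutorial?page=2">Next</a>',
    "/cases-tutorial?page=2": '<a href="/t/2">Two</a><a href="/cases-tutorial?page=3">Next</a>',
    "/cases-tutorial?page=3": '<a href="/t/3">Three</a>',
}


class NextLinkFinder(HTMLParser):
    """Records the href of the first <a> whose visible text is 'Next'."""

    def __init__(self):
        super().__init__()
        self.next_href = None
        self._open_href = None  # href of the <a> currently being parsed

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            self._open_href = dict(attrs).get("href")

    def handle_endtag(self, tag):
        if tag == "a":
            self._open_href = None

    def handle_data(self, data):
        if self._open_href and data.strip() == "Next" and self.next_href is None:
            self.next_href = self._open_href


def paginate(start_url, pages, max_pages=100):
    """Follow 'Next' links from start_url and return the visited URLs in order."""
    visited = []
    url = start_url
    while url is not None and url not in visited and len(visited) < max_pages:
        visited.append(url)
        finder = NextLinkFinder()
        finder.feed(pages[url])
        url = finder.next_href  # None on the last page, which ends the loop
    return visited


print(paginate("/cases-tutorial", PAGES))
```

The `max_pages` cap and the `visited` check are there so a site whose “Next” link points back to an earlier page cannot trap the loop forever.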

Next, we create a list that collects all the items on each page, so that we can then scrape data from their detail pages.

Now we can extract data from each tutorial. Click the data you want to extract from the page, and your selections are saved in the “Extract Data” action.
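Octoparse needs no code for these steps, but a rough Python sketch may help show what the list and the “Extract Data” action do conceptually: gather the item links on a list page, open each detail page, and pull out the selected fields into rows. Everything here is hypothetical: the `item` and `summary` class names, the URLs, and the page contents are invented for illustration, and the pages are in-memory strings rather than live downloads.

```python
from html.parser import HTMLParser

# Hypothetical list page and detail pages; a real crawler would download them.
LIST_PAGE = (
    '<ul><li><a class="item" href="/t/1">A</a></li>'
    '<li><a class="item" href="/t/2">B</a></li></ul>'
)
DETAIL_PAGES = {
    "/t/1": '<h1>Tutorial one</h1><p class="summary">First example page</p>',
    "/t/2": '<h1>Tutorial two</h1><p class="summary">Second example page</p>',
}


class ItemLinkCollector(HTMLParser):
    """Builds the 'list': hrefs of every <a> carrying the class 'item'."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "a" and "item" in (attrs.get("class") or "").split():
            self.links.append(attrs.get("href"))


class FieldExtractor(HTMLParser):
    """Plays the role of 'Extract Data': captures text of the chosen elements."""

    def __init__(self):
        super().__init__()
        self.fields = {}
        self._current = None  # name of the field whose element is open

    def handle_starttag(self, tag, attrs):
        classes = (dict(attrs).get("class") or "").split()
        if tag == "h1":
            self._current = "title"
        elif tag == "p" and "summary" in classes:
            self._current = "summary"

    def handle_endtag(self, tag):
        self._current = None

    def handle_data(self, data):
        if self._current:
            self.fields[self._current] = self.fields.get(self._current, "") + data


def scrape(list_html, detail_pages):
    """Collect item links from the list page, then extract fields from each detail page."""
    collector = ItemLinkCollector()
    collector.feed(list_html)
    rows = []
    for href in collector.links:
        extractor = FieldExtractor()
        extractor.feed(detail_pages[href])
        row = {name: text.strip() for name, text in extractor.fields.items()}
        row["url"] = href
        rows.append(row)
    return rows


for row in scrape(LIST_PAGE, DETAIL_PAGES):
    print(row)
```

Keeping the list step and the extraction step separate mirrors the workflow described here: the list decides which pages to visit, and the extractor decides which fields to keep from each one.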

That’s it: the web crawler is built and starts scraping data. Pretty easy, right?

You can modify the web crawler at any time and collect the latest data whenever you want. Octoparse also provides a cloud service that runs your web crawlers in the cloud, so you can get real-time data quickly.

I hope this small example gives you an idea of what you can do in your own web scraping projects. Of course, Octoparse’s advanced features let you extract much more data from more complex websites. Just take the first step: build one web crawler, and contact the Octoparse support team if things don’t go as planned. The free web crawler is easy to master and should work as expected.

Download Octoparse now. Happy scraping!