If you’re looking for web scraping tools and the headline of this post confuses you, take a deep breath. We’re not going to talk about a “beautiful soup recipe,” but about Beautiful Soup, the Python package that is popular for web scraping.

You’ll get a fundamental overview of Beautiful Soup in this article. Then, we’ll discuss a number of Beautiful Soup alternatives, one of which doesn’t even involve any coding to scrape data from websites!

What is Beautiful Soup

Beautiful Soup is a Python library, to put it simply. In the real world, a library is a collection of books kept for future use. Not much changes in the programming world. A Python library is a collection of code modules that a program can reuse for specific operations. Beautiful Soup is one of these libraries, designed as an HTML parser for quick-turnaround projects like screen scraping.

To use Beautiful Soup to pull data out of HTML and XML files, you first need the pages themselves, which are typically downloaded with the Python requests library. After making a GET request to a web server, you’ll get the HTML contents of the page. Then, you can import Beautiful Soup and parse that HTML into a tree you can search. Methods such as find, find_all, and get_text help you get the data you want.
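The workflow above can be sketched roughly as follows. This is a minimal illustration, not a complete scraper: the helper name `fetch_html` and the `SAMPLE_HTML` snippet are made up for this example, and the sample string stands in for a downloaded page so the parsing part runs without a network call.

```python
import requests
from bs4 import BeautifulSoup


def fetch_html(url: str) -> str:
    """Hypothetical helper: download a page with a GET request and return its HTML."""
    response = requests.get(url, timeout=10)
    response.raise_for_status()  # raise an error on 4xx/5xx responses
    return response.text


# Made-up page content standing in for the result of fetch_html(...).
SAMPLE_HTML = """
<html><body>
  <h1>Book list</h1>
  <ul>
    <li class="book"><a href="/books/1">Dune</a></li>
    <li class="book"><a href="/books/2">Emma</a></li>
  </ul>
</body></html>
"""

# Parse the HTML with Python's built-in parser.
soup = BeautifulSoup(SAMPLE_HTML, "html.parser")

# find returns the first match; find_all returns every match.
heading = soup.find("h1").get_text()
titles = [li.get_text(strip=True) for li in soup.find_all("li", class_="book")]
links = [a["href"] for a in soup.find_all("a")]

print(heading)
print(titles)
print(links)
```

For a real page, you would pass the string returned by `fetch_html` to `BeautifulSoup` instead of `SAMPLE_HTML`.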

Looking at these methods, you’ll find that they are very simple. In fact, this is one of Beautiful Soup’s greatest advantages. It gives users simple Pythonic idioms to extract data, reducing the amount of code needed to develop a scraper. In addition, it automatically converts incoming documents to Unicode and outgoing documents to UTF-8, so in most situations users don’t have to think about encodings.
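As a small illustration of the encoding behavior, the sketch below feeds Beautiful Soup some bytes that are not UTF-8. The document is made up for this example, and it declares its own charset in a meta tag so that detection does not depend on guessing.

```python
from bs4 import BeautifulSoup

# A made-up document encoded as ISO-8859-1 (not UTF-8), with its charset declared.
raw_bytes = (
    '<html><head><meta charset="iso-8859-1"></head>'
    "<body><p>café</p></body></html>"
).encode("iso-8859-1")

soup = BeautifulSoup(raw_bytes, "html.parser")

# Incoming bytes are decoded to Unicode strings.
text = soup.p.get_text()

# Outgoing documents are encoded as UTF-8 by default.
utf8_bytes = soup.encode()

print(text)
print(utf8_bytes.decode("utf-8"))
```

When a page declares no charset, Beautiful Soup has to guess, so checking the extracted text is still worthwhile.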

However, everything has its pros and cons. Beautiful Soup is not difficult to learn, but it is best suited to small and simple projects. It also has no built-in XPath support: you locate data with its own search methods or with CSS selectors, which are mainly designed to match forward through a document rather than backward toward a parent element.

3 Beautiful Soup Alternatives in Python

You should now have a general understanding of Beautiful Soup. Don’t worry if it turns out not to be the right tool for you; in this section, we’ll introduce three substitutes, one of which may suit your needs better.

lxml

lxml is one of the fastest and most feature-rich Python libraries for processing XML and HTML. It can be used to create HTML documents, read existing documents, and find specific elements within them. For this reason, many developers use it for data scraping. Unlike Beautiful Soup, it offers XPath in addition to CSS selectors for locating data. It is also generally faster at parsing large documents.
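Here is a minimal sketch of locating data with XPath in lxml. The `SAMPLE_HTML` snippet is made up for this example. The last query shows XPath stepping backward from a link to its parent element.

```python
from lxml import html

# Made-up page content used only for illustration.
SAMPLE_HTML = """
<html><body>
  <ul>
    <li class="book"><a href="/books/1">Dune</a></li>
    <li class="book"><a href="/books/2">Emma</a></li>
  </ul>
</body></html>
"""

tree = html.fromstring(SAMPLE_HTML)

# Select text nodes and attribute values directly with XPath.
titles = tree.xpath('//li[@class="book"]/a/text()')
links = tree.xpath('//li[@class="book"]/a/@href')

# Walk backward: from the link whose text is "Emma" to its parent <li>.
parent_classes = tree.xpath('//a[text()="Emma"]/parent::li/@class')

print(titles)
print(links)
print(parent_classes)
```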

parsel

Another Python library is parsel. It’s essentially a wrapper around lxml, streamlined for web scraping. As such, it offers similar functions for extracting and removing data from HTML and XML using XPath and CSS selectors. Users can go one step further and apply regular expressions to the strings a selector returns.

html5lib

html5lib is a library written in pure Python for parsing HTML. It reads the HTML tree in a manner that is very close to how a web browser would. As a result, it can break down nearly every element of an HTML document into separate tags and parts for a variety of use cases. Moreover, it can handle broken HTML and add the tags needed to complete the structure. It can be used on its own or as a parser backend for Beautiful Soup.
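To illustrate the repair behavior, here is a minimal sketch that uses html5lib as a Beautiful Soup backend. It assumes both packages are installed, and the broken markup is made up for this example.

```python
from bs4 import BeautifulSoup

# Made-up broken markup: no <html>/<body>, and neither <p> tag is closed.
BROKEN_HTML = "<p>First<p>Second"

# html5lib rebuilds the document the way a browser would.
repaired = BeautifulSoup(BROKEN_HTML, "html5lib")
paragraphs = [p.get_text() for p in repaired.find_all("p")]

# For comparison, Python's built-in parser does not add the missing wrapper tags.
builtin = BeautifulSoup(BROKEN_HTML, "html.parser")

print(repaired.body is not None)  # html5lib added a <body>
print(builtin.body is None)       # html.parser did not
print(paragraphs)
```

The trade-off is speed: a pure-Python parser that follows browser rules is usually slower than lxml.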

Scrapy is another popular option for web scraping. You can learn more by reading the article about Scrapy and its alternatives.

No-Coding Web Scraper Alternative to Beautiful Soup

Having read this far, you might be wondering whether you can extract data from websites without any coding experience. The answer is YES! There are many no-coding web scrapers nowadays, and some of them can be alternatives to Beautiful Soup and the Python libraries mentioned above.

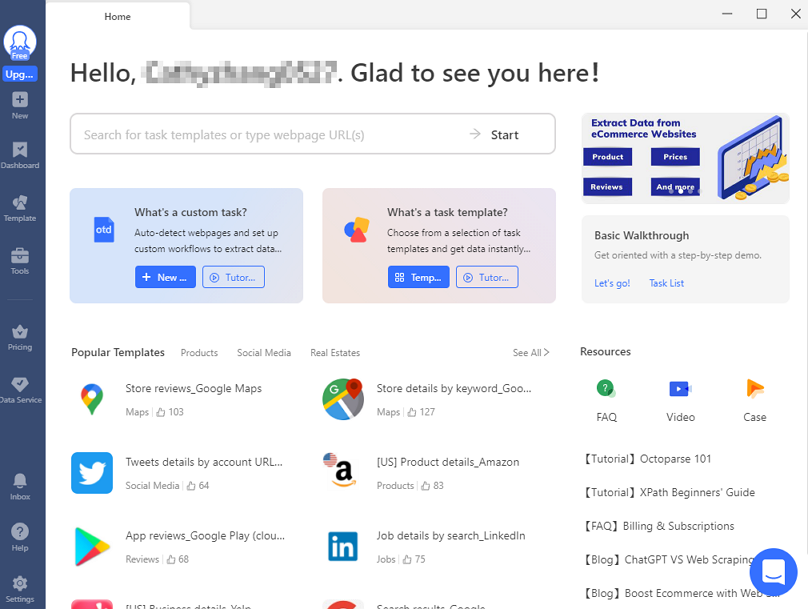

In this part, we’ll show how you can scrape data from webpages with an easy-to-use tool, Octoparse, with no coding required. If you have not used it before, feel free to download and install it on your device (versions for both Windows and Mac are available).

When you open Octoparse, you need to sign up for a free account to log in. After logging in, you can use the features in Octoparse to extract data. In general, you can get data by following the steps below.

Step 1: Create a task

Copy the URL of the page you want to extract data from and paste it into the search bar, then click “Start” to create a new task. The page will then load in Octoparse’s built-in browser within seconds.

Step 2: Auto-detect webpage data

Once the page has finished loading, click “Auto-detect webpage data” in the Tips panel. Octoparse will then scan the whole page and “guess” what data fields you want. It highlights the detected data on the page so you can see where it comes from. You can also preview these data fields at the bottom and delete any unwanted data.

The auto-detection feature can save you a lot of time in selecting data. Additionally, Octoparse offers XPath selectors, just like some Python libraries do. You can use them to select data fields in Octoparse and get more accurate data, especially when the structure of a website is complicated.

Step 3: Create the workflow

Make sure you’ve selected all the data fields you need, then click “Create workflow”. Next, look at the right-hand side, where you will see a workflow outlining each stage of the scraping procedure. Check that each step works properly so the task doesn’t come back with no data. To do so, click on each step to preview the action.

Step 4: Run the task

After you’ve double-checked all the details, it’s time to launch the scraper! Click “Run” to start the extraction, and Octoparse will handle the rest. Wait for the process to complete, then export the scraped data as an Excel, CSV, or JSON file.

Wrap-up

In this article, we’ve introduced Beautiful Soup and three alternative Python libraries for extracting data from HTML and XML files. You can also find more alternatives in the list of top 10 open-source web scrapers. All of them are easy to use once you have basic knowledge of Python and coding. By contrast, Octoparse is accessible to everyone, regardless of coding experience. If you are looking for a no-code data scraping solution, it could be a good option.