Big data is a term that describes large, diverse sets of structured and unstructured data. This data is so voluminous and fast-moving that it is hard to manage and process with traditional data processing software.

Big data aims to answer questions that have not been answered before by leveraging technologies such as artificial intelligence and machine learning. For businesses, the data that flows in day after day is a goldmine of new insights that can take them to the next level.

There are numerous ways to implement data collection, which lays the foundation for all the work ahead. Each approach has its pros and cons, but they all share something in common, and if you are just about to start, it’s worthwhile to check them out.

In the following parts, you can learn the 5 steps to collect big data, and the best data collection tool to help you gather big data without coding.

5 Steps to Collect Big Data

Raw, random data has little value by itself. Messy data doesn’t tell us anything new or meaningful. Big data creates value for businesses and enterprises when the data is well-structured (ready to be analyzed by software), cleaned (unwanted parts trimmed away), and validated.

Step 1: Gather data

There are many ways to gather data, depending on your purpose. For example, you can buy data from Data-as-a-Service companies or use a data collection tool to gather data from websites.
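To give a feel for what a data collection tool does under the hood when it gathers data from a website, here is a minimal sketch using only Python's standard library. The HTML snippet, the `product` class name, and the `ProductParser` and `extract_products` names are all made up for illustration; a real tool would first download the page and would handle far messier markup.

```python
from html.parser import HTMLParser

# Hypothetical page content; a real collector would download this from a site.
SAMPLE_HTML = """
<ul>
  <li class="product">Laptop</li>
  <li class="product">Phone</li>
  <li class="ad">Sponsored</li>
</ul>
"""


class ProductParser(HTMLParser):
    """Collects the text of every <li class="product"> element."""

    def __init__(self):
        super().__init__()
        self.in_product = False
        self.products = []

    def handle_starttag(self, tag, attrs):
        # attrs is a list of (name, value) pairs
        if tag == "li" and dict(attrs).get("class") == "product":
            self.in_product = True

    def handle_endtag(self, tag):
        if tag == "li":
            self.in_product = False

    def handle_data(self, data):
        # Keep only non-empty text found inside a product item
        if self.in_product and data.strip():
            self.products.append(data.strip())


def extract_products(html):
    parser = ProductParser()
    parser.feed(html)
    return parser.products


products = extract_products(SAMPLE_HTML)
print(products)
```

The parser keeps the two product names and skips the list item marked as an ad, which is the same idea as an extraction rule that tells a tool which parts of a page to keep.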

Step 2: Store data

After gathering the data, you can put it into databases or other storage for further processing. Usually, this step requires investment in physical servers, cloud services, or both. Some data collection tools come with cloud storage for the gathered data, which saves local resources and makes the data easy to access from anywhere.
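As a small-scale illustration of putting gathered data into a database, the sketch below uses SQLite from Python's standard library. The `products` table, its columns, and the sample records are assumptions for this example; a real big data setup would more likely use a server or cloud database, and the in-memory database here is only for demonstration.

```python
import sqlite3


def store_records(conn, records):
    """Create the (hypothetical) products table if needed and insert the records."""
    conn.execute("CREATE TABLE IF NOT EXISTS products (name TEXT, price REAL)")
    conn.executemany("INSERT INTO products (name, price) VALUES (?, ?)", records)
    conn.commit()


# ":memory:" keeps everything in RAM; pass a file path to persist the data.
conn = sqlite3.connect(":memory:")
store_records(conn, [("Laptop", 999.0), ("Phone", 599.0)])

row_count = conn.execute("SELECT COUNT(*) FROM products").fetchone()[0]
print(row_count)
```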

Step 3: Clean data

Data cleaning is important for effective data analytics. Since the gathered data may contain noisy information you don’t need, you have to pick out the parts that meet your needs. This step sorts the data out, including cleaning up, concatenating, and merging it.
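A rough sketch of what cleaning, concatenating, and merging can look like in practice is shown below. The record layout (`name` and `price` fields), the two sample sources, and the rule that names are compared case-insensitively are assumptions chosen for the example, not a fixed recipe.

```python
def clean_records(records):
    """Trim whitespace, drop rows without a name, and remove duplicate names."""
    seen = set()
    cleaned = []
    for record in records:
        name = (record.get("name") or "").strip()
        if not name:
            continue  # noisy row: nothing usable in the name field
        key = name.lower()
        if key in seen:
            continue  # duplicate of a row we already kept
        seen.add(key)
        cleaned.append({**record, "name": name})
    return cleaned


# Two hypothetical sources that overlap and contain some noise
source_a = [{"name": " Laptop ", "price": 999.0}, {"name": "", "price": 10.0}]
source_b = [{"name": "laptop", "price": 999.0}, {"name": "Phone", "price": 599.0}]

# Concatenate the sources, then clean the merged list
cleaned = clean_records(source_a + source_b)
print(cleaned)
```

When two rows share a name, this sketch keeps the first one it sees. Depending on your data, you might prefer to keep the most recent row or to combine fields from both.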

Step 4: Reorganize data

You need to reorganize the data after cleaning it up for further use. Usually, this means turning unstructured or semi-structured data into structured formats that can be loaded into storage and processing systems like Hadoop and HDFS.
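To make the idea of reorganizing concrete, the following sketch flattens a small piece of semi-structured JSON into CSV rows with a fixed set of columns. The JSON content, the column names in `FIELDS`, and the choice to join tags with a semicolon are assumptions for illustration only.

```python
import csv
import io
import json

# Hypothetical semi-structured input: nested objects and variable-length lists
RAW_JSON = """
[
  {"name": "Laptop", "specs": {"ram_gb": 16}, "tags": ["work", "travel"]},
  {"name": "Phone", "specs": {}, "tags": []}
]
"""

FIELDS = ["name", "ram_gb", "tags"]


def flatten(item):
    """Map one nested item onto the fixed columns, leaving gaps as empty strings."""
    return {
        "name": item.get("name", ""),
        "ram_gb": item.get("specs", {}).get("ram_gb", ""),
        "tags": ";".join(item.get("tags", [])),
    }


def to_csv(raw_json):
    rows = [flatten(item) for item in json.loads(raw_json)]
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=FIELDS, lineterminator="\n")
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()


csv_text = to_csv(RAW_JSON)
print(csv_text)
```

Every row ends up with the same columns, even when the source item is missing a value, which is what makes the result easy to load into a table or a downstream analytics system.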

Step 5: Verify data

To make sure the data you get is right and makes sense, you need to verify it. Test with samples of the data to see whether it works as expected. Confirm that you are heading in the right direction before you apply these techniques to your full source data.
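One simple way to test with samples is to draw a few records at random and check each against basic rules. The sketch below assumes records with a `name` and a `price` field and treats empty names and negative prices as invalid; both the fields and the rules are placeholders that you would swap for checks that suit your own data.

```python
import random


def is_valid(record):
    """Example rules: a non-empty text name and a non-negative numeric price."""
    name = record.get("name")
    price = record.get("price")
    return (
        isinstance(name, str)
        and bool(name.strip())
        and isinstance(price, (int, float))
        and not isinstance(price, bool)
        and price >= 0
    )


def verify_sample(records, sample_size, seed=0):
    """Check a random sample and return how many were checked plus the failures."""
    rng = random.Random(seed)  # fixed seed so the same sample is drawn each run
    sample = rng.sample(records, min(sample_size, len(records)))
    invalid = [record for record in sample if not is_valid(record)]
    return len(sample), invalid


records = [
    {"name": "Laptop", "price": 999.0},
    {"name": "Phone", "price": -5},
    {"name": "", "price": 20},
]
checked, invalid = verify_sample(records, sample_size=3)
print(f"checked {checked} records, {len(invalid)} failed validation")
```

If a large share of the sample fails, that is a signal to revisit the gathering or cleaning step before processing the full data set.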

Best Big Data Collection Tool

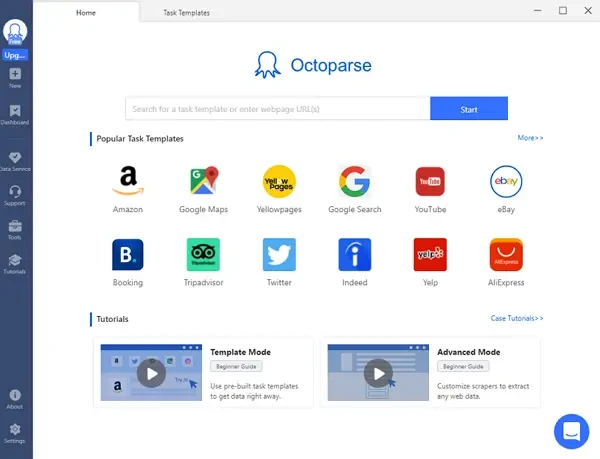

Above are the five general steps to collect the data required for big data analytics. However, collecting the data, analyzing it, and gleaning insights into markets is not an easy process without assistance. So it is better to use a data collection tool like Octoparse to obtain the data you want, which makes the process much easier.

Most data collection tools can help collect a large amount of data within a short time, and they let users gather clean, structured data automatically, which reduces the need to clean it up or reorganize it afterward. Octoparse is one example. It is a simple but powerful data collection tool that automates web data extraction and lets you create highly accurate extraction rules. Crawlers run in Octoparse follow the configured rules, which tell Octoparse what data to get.

Octoparse has two modes for extracting data. You can choose online templates for popular sites to get data in a few clicks, or build a crawler yourself without any coding skills. Advanced functions such as pagination, loops, IP rotation, and scheduled scraping are also available in Octoparse. You can export the scraped data to Excel, CSV, or Google Sheets as needed.

Download Octoparse, sign up for a free account, and follow the Octoparse user guide to start scraping data easily. What’s more, you can try the online data scraping template below to get data even more easily.