Rakuten is a Japanese e-commerce and online retail company that offers shoppers cash back on their purchases. Because it partners with numerous online stores, e.g., Walmart, Nike, Best Buy, Target, and other leading brands, it covers a wide range of products.

As a hub of the e-commerce world, Rakuten can help private and professional sellers worldwide grow their businesses. That only works if you have enough information about the platform, such as its product listings. Scraping Rakuten product listings is one way to collect that data.

This guide covers several aspects of scraping Rakuten’s product listings, including the data you can extract, Rakuten’s APIs, the benefits, and a scraping process that needs no coding.

Rakuten Web Scraping

Rakuten, a leading name in e-commerce based in Tokyo, is often called the ‘Amazon of Japan’. It gives consumers access to coupons, promo codes, and discounts offered by thousands of retailers. Its most popular feature is its cash back program, which returns part of each purchase as an online rebate.

Which data types can be scraped from Rakuten

Rakuten hosts a large volume of data from some of the world’s biggest online brands, and much of it can be extracted with a reliable scraping tool. Data can be scraped for almost every product category on Rakuten, including:

- Product listings

- Product specification

- Product price

- Product image

- Seller prices with details

- Customer reviews

- Sales rank

Scraping Rakuten therefore yields a large amount of information that you can use to grow your business.
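A scraped listing can be modeled as a simple record. The sketch below is a minimal, hypothetical Python structure covering the fields listed above. The class name, field names, and sample values are illustrative assumptions, not part of Rakuten or of any scraping tool.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class ProductRecord:
    """One scraped product listing (hypothetical field names)."""
    title: str
    price: float                       # listing price in the site's currency
    specification: str = ""
    image_url: str = ""
    seller: str = ""
    sales_rank: Optional[int] = None   # None when the page shows no rank
    reviews: List[str] = field(default_factory=list)


# Made-up example values, for illustration only.
record = ProductRecord(title="Example kettle", price=3980.0, seller="Example Shop")
record.reviews.append("Boils quickly.")
print(record)
```

Keeping every row in one shape like this makes it easier to compare listings later, whichever tool produced them.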

Does Rakuten have an API

Rakuten offers several public APIs for its different services, such as e-commerce, travel reservations, and recipes. Most importantly, its e-commerce API provides extensive information about products listed on Rakuten sites, along with shopping cart and other functionality, for use by developers and enterprise teams.

To access these APIs, follow the steps given on the site. First, choose the right API from the services Rakuten provides, most likely the e-commerce API (Ichiba). Next, test the request parameters and examine the data that will be returned. Finally, obtain an application ID, which is required to call the API.
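The steps above can be sketched in Python. This is a minimal sketch that assumes the Ichiba item search endpoint and the `applicationId`, `keyword`, `hits`, and `format` parameter names shown below. Check them, and the response layout, against Rakuten’s current API documentation. `YOUR_APPLICATION_ID` is a placeholder, and the sample response is made up so that the parsing step can be examined offline.

```python
import json
from urllib.parse import urlencode

# Assumed endpoint for the Ichiba item search API; verify the path and
# version against Rakuten's developer documentation before using it.
ENDPOINT = "https://app.rakuten.co.jp/services/api/IchibaItem/Search/20170706"


def build_search_url(application_id: str, keyword: str, hits: int = 10) -> str:
    """Build a search request URL from the request parameters."""
    params = {
        "applicationId": application_id,  # issued when you register an app
        "keyword": keyword,
        "hits": hits,                     # results per page (assumed name)
        "format": "json",
    }
    return f"{ENDPOINT}?{urlencode(params)}"


def extract_items(payload: dict) -> list:
    """Pull name and price out of a response shaped like SAMPLE below."""
    items = []
    for entry in payload.get("Items", []):
        item = entry.get("Item", entry)   # tolerate flat or nested entries
        items.append({"name": item.get("itemName"), "price": item.get("itemPrice")})
    return items


# Made-up response used only to examine the parsing step offline.
SAMPLE = json.loads('{"Items": [{"Item": {"itemName": "Example kettle", "itemPrice": 3980}}]}')

url = build_search_url("YOUR_APPLICATION_ID", "kettle")
print(url)
print(extract_items(SAMPLE))
```

To send a real request, pass the URL to an HTTP client such as `urllib.request.urlopen` and decode the JSON body before handing it to `extract_items`.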

Major benefits of scraping Rakuten

Scraping Rakuten has many benefits. Although many kinds of data can be extracted from this online retail store, its product listings and citations play a particularly important role in growing a small e-commerce business. Here are some major benefits of scraping this e-commerce site.

1. Competitor Analysis

You can learn your competitors’ full product lists, sales ranks, and pricing, which helps you compete more effectively and stand out.

2. Market Research

You can identify the resellers and competitors in your market who sell the same products, so that you can outperform them.

3. Know your Customer

You can get to know your customers by extracting the reviews of particular products and learning about their intent and questions.

4. Online Retail Arbitrage

You can export the specifications of the lowest-priced product to Excel or Google Sheets and list it on another marketplace to profit from retail arbitrage.
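As a rough illustration of the arbitrage step, the sketch below picks the lowest-priced listing from scraped rows and estimates the margin at a target resale price. The keys (`title`, `seller`, `price`), the sample rows, and the flat 10% fee rate are hypothetical assumptions. A real export will have its own columns, and a real marketplace its own fees.

```python
# Made-up rows standing in for scraped listings of the same product.
listings = [
    {"title": "Example kettle", "seller": "Shop A", "price": 4200.0},
    {"title": "Example kettle", "seller": "Shop B", "price": 3980.0},
    {"title": "Example kettle", "seller": "Shop C", "price": 4500.0},
]


def cheapest(rows):
    """Return the row with the lowest price."""
    return min(rows, key=lambda row: row["price"])


def margin(buy_price, resale_price, fee_rate=0.10):
    """Estimated profit after a flat marketplace fee (assumed 10%)."""
    return resale_price * (1 - fee_rate) - buy_price


best = cheapest(listings)
profit = margin(best["price"], resale_price=5000.0)
print(best["seller"], round(profit, 2))
```

Shipping, taxes, and currency conversion are left out here and would reduce the estimated margin.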

5. Unauthorized Sales Channel Monitoring

By extracting product listings, you can find sellers who list below the reseller price, which helps you detect parallel imports or replicas offered by a potential reseller.
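One way to act on this is to compare each seller’s price against your minimum reseller price. The sketch below assumes hypothetical keys (`seller`, `price`) and a made-up threshold. It only flags candidates for manual review and does not prove that a listing is a parallel import or a replica.

```python
def flag_below_reseller_price(rows, reseller_price):
    """Return sellers listing below reseller_price, cheapest first."""
    flagged = [row for row in rows if row["price"] < reseller_price]
    return sorted(flagged, key=lambda row: row["price"])


# Made-up listings for one product; names and prices are illustrative.
rows = [
    {"seller": "Shop A", "price": 4200.0},
    {"seller": "Shop B", "price": 3100.0},
    {"seller": "Shop C", "price": 3650.0},
]

for row in flag_below_reseller_price(rows, reseller_price=3800.0):
    discount = 1 - row["price"] / 3800.0
    print(f'{row["seller"]}: {row["price"]:.0f} ({discount:.0%} below)')
```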

How to Scrape Rakuten Without Coding

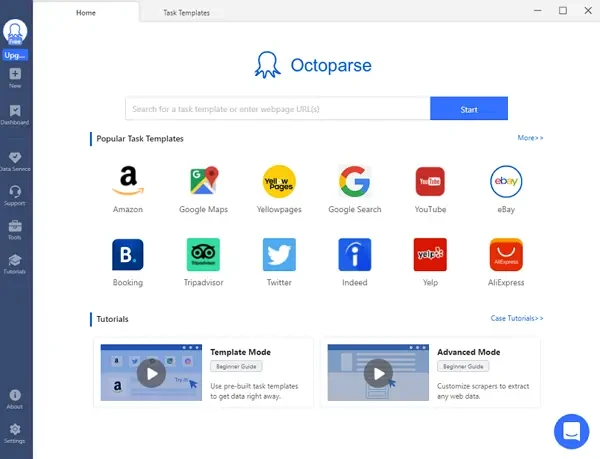

To scrape Rakuten, you need a reliable web scraping tool that is easy to use. Many tools are available online. One convenient option is Octoparse, which lets you scrape websites without writing any code. It also provides preset templates for scraping Rakuten.

Steps to extract data from Rakuten with Octoparse

Step 1: Enter a Rakuten page link for scraping

First, download and install Octoparse, then open it. Enter the target Rakuten URL in the search bar and click the Start button. Octoparse opens the webpage and begins auto-detection.

Step 2: Select the data fields and create a workflow

After auto-detection finishes, the detected data appears in the preview section. Create a workflow and use the Tips panel to adjust the data fields until they cover everything you want to scrape from Rakuten.

Step 3: Start extracting data from Rakuten

If there is nothing more to change, click the Run button to start scraping data from the Rakuten page. Once the run finishes, you can download the scraped data as an Excel file or in another supported format.
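Once the data is downloaded, it can be processed further in code. The sketch below is a minimal example that assumes a CSV export with `title` and `price` columns. The column names, the inline sample, and the `export.csv` filename are assumptions. An actual export uses whatever field names you set in the workflow.

```python
import csv
import io

# Inline sample standing in for an exported file. With a real export, replace
# io.StringIO(SAMPLE_CSV) with open("export.csv", newline="", encoding="utf-8").
SAMPLE_CSV = 'title,price\nExample kettle,"3,980"\nExample mug,1200\n'


def load_prices(handle):
    """Read rows and convert the price column to float, dropping commas."""
    rows = []
    for row in csv.DictReader(handle):
        row["price"] = float(row["price"].replace(",", ""))
        rows.append(row)
    return rows


products = load_prices(io.StringIO(SAMPLE_CSV))
average = sum(p["price"] for p in products) / len(products)
print(average)
```

Stripping thousands separators before converting is a defensive choice, since scraped prices often arrive as formatted text rather than plain numbers.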

Rakuten online data scraping template

An even easier way to extract data from Rakuten is to use the online data scraping templates provided by Octoparse. With the online Rakuten template, you can scrape product information such as prices and stores. Try it from the link below.

https://www.octoparse.com/template/rakuten-product-scraper

Wrap-up

Rakuten ranks among the world’s best-known e-commerce stores. Although it is a Japanese site, its business has expanded over time. Because it is such a large retailer, its product listings are a valuable data source, and scraping them can help you grow your own online store. Octoparse is a helpful tool for this, since it performs web scraping jobs without coding. This guide has covered why you might scrape Rakuten and how to do it.