What Is a Job Aggregator

A job aggregator is a platform that gathers job opportunities from job boards, company and institutional career pages, and any other places where job openings are posted. It works like a search engine for jobs: when you enter a keyword for a location, position, or skill, it filters its job database and shows the vacancies that match your search.
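The keyword-and-location filtering described above can be sketched in a few lines. This is a minimal illustration, not how any particular aggregator works: the `JobPosting` fields and the `search` function are hypothetical, and matching is a simple case-insensitive substring test over the title and listed skills.

```python
from dataclasses import dataclass


@dataclass
class JobPosting:
    # Hypothetical record shape; real aggregators store many more fields.
    title: str
    company: str
    location: str
    skills: list[str]


def search(jobs: list[JobPosting], keyword: str = "", location: str = "") -> list[JobPosting]:
    """Return postings whose title or skills contain `keyword` and whose
    location contains `location` (case-insensitive substring match).
    An empty keyword or location matches every posting."""
    kw = keyword.lower()
    loc = location.lower()
    results = []
    for job in jobs:
        searchable = " ".join([job.title, *job.skills]).lower()
        if kw in searchable and loc in job.location.lower():
            results.append(job)
    return results


jobs = [
    JobPosting("Video Editor", "Acme Media", "Seattle, WA", ["Premiere Pro"]),
    JobPosting("Data Analyst", "Globex", "Seattle, WA", ["SQL", "Python"]),
    JobPosting("Video Editor", "Initech", "Austin, TX", ["After Effects"]),
]

for job in search(jobs, keyword="video editor", location="seattle"):
    print(job.company)  # prints "Acme Media"
```

A linear scan like this is fine for a toy dataset; a production aggregator with millions of postings would typically use a full-text search index instead.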

For Employers

Job aggregators help employers expose jobs to more candidates. Some also provide customized company pages for branding, highlighted positions, and other services. Job postings collected by aggregators can also serve as a source of competitive intelligence: from a competitor's listings, a company can infer its staffing situation, the projects it is hiring for, and sometimes even hints about its budget.

For Job-seekers

Learning about the latest vacancies is just one of the benefits. Job-seekers can learn more about the companies they are interested in through reviews from past interviewees and employees. Some aggregators also let you pay for a report on how you compare with other candidates competing for the same position.

How Do Job Aggregators Make Money

A job aggregator collects job data and presents it to users. As the business matures, a job aggregator may act as a contractor that handles the entire hiring process for a company.

Here are several common ways they make money.

1. Subscriptions with Pricing Tiers: Tiers are based on the number of listings an employer can post and the number of resumes it can download. Monster and ZipRecruiter use this model.

2. Company Branding: Add-on features for the company page and promotion of that page.

3. Hiring Events: Event marketing, applicant screening, and scheduling and coordination throughout the process. If you want to learn more about this business model, Indeed is a good example to study.

4. Sponsored Postings: Top placement in the search results (e.g., a pay-per-click model).

5. Notifications: Instant notifications when a suitable candidate enters the pool.

6. Competitiveness Analysis (for applicants): The number of applicants competing for the same position, plus each applicant's comparative strengths and weaknesses.

There are many other variants, but the key is always the same: make it more likely that employers and candidates find each other, and make it cheaper for them to do so.
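To make the Sponsored Postings model (item 4) more concrete, here is a minimal ranking sketch under simple assumptions: paid postings are placed above organic ones, and each group keeps its relevance order. The `sponsored` flag and `relevance` score are hypothetical fields; real pay-per-click systems also weigh bids and click-through rates, and the per-click billing itself is not shown here.

```python
from dataclasses import dataclass


@dataclass
class RankedPosting:
    # Hypothetical fields for illustration only.
    title: str
    relevance: float  # score from the search step, higher is better
    sponsored: bool   # True if the employer paid for placement


def rank(postings: list[RankedPosting]) -> list[RankedPosting]:
    # Sponsored postings sort first (not True == False sorts before True),
    # then postings are ordered by descending relevance within each group.
    return sorted(postings, key=lambda p: (not p.sponsored, -p.relevance))


results = rank([
    RankedPosting("Video Editor, Acme", 0.92, False),
    RankedPosting("Video Editor, Initech", 0.75, True),
    RankedPosting("Motion Designer, Globex", 0.60, False),
])
print([p.title for p in results])
# ['Video Editor, Initech', 'Video Editor, Acme', 'Motion Designer, Globex']
```

Putting every sponsored result first is the simplest policy; many sites instead reserve only a fixed number of top slots for paid postings so that organic results stay visible.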

How to Build a Job Aggregator

Below are three suggestions to help you build a job aggregator.

Zero in on a Niche Market

Finding your edge is the key to surviving fierce competition. Your chances of success are slim if you don't differentiate your service from giants like Glassdoor and Indeed. In every industry, the marketplace is full of similar products and services, so the real question is how to attract customers and make them choose you. The answer is to set yourself apart from the rest of the market, and the right tools can help you do that.

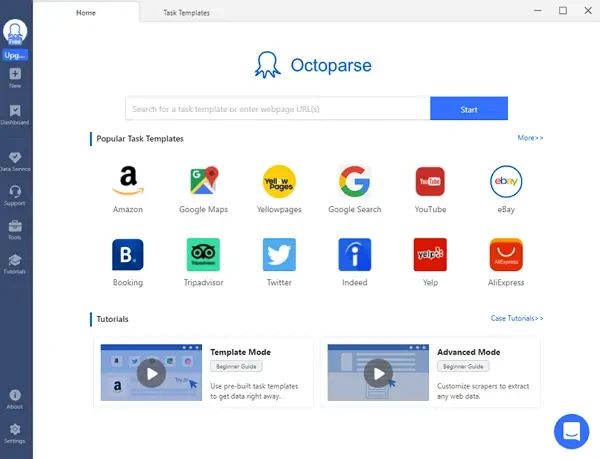

Octoparse provides a desktop tool and data scraping services to a number of job aggregator operators (put simply, we help scrape the job postings that fuel their sites). It is a full-featured web scraper available for both Mac and Windows. Besides job data, Octoparse can extract e-commerce data, news data, and more. You can export the data as Excel, HTML, or CSV files, analyze it, and choose the right market strategy for your business.

Job data can give you valuable insights into the job market. You don't need to know how to program to use Octoparse: its auto-detection feature identifies the target data on a page for you. Octoparse also has built-in web scraping templates. Just choose a template that covers the data you need, fill in the required information, and wait for the scraper to collect the data. Octoparse also offers data services through a professional data team that helps solve data problems. Talk to our data experts if you need web scraping and want to explore more possibilities.

As an example, let's see how to use an Octoparse “Task Template” to scrape job data from Glassdoor.

Step One: Find the scraper (Glassdoor Job Information)

Click the “+” sign to create a task from a template, and you will see a list of available templates. Under the “Job” tab, find the template named “Glassdoor Job Information”; that is the one we are going to use.

Step Two: Enter the parameters into your scraper

Now, tell the scraper what to do for you. There are two fields you are required to fill in:

Keyword: the job title or any other keyword you want to search for, e.g. Video editor

Location: the location you want to search, e.g. Seattle

Enter the parameters and click the “Save & Run” button to launch the scraper.

Step Three: Run the scraper and export the data when it is completed

This template can run either in the Cloud or locally. Once all the data has been scraped, you can export it in formats such as Excel, CSV, JSON, and HTML. Alternatively, you can send the data to your database or data visualization tools through the Octoparse API.
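If you take the file-export route rather than the API, a short script can load an exported CSV into a database. This is a minimal sketch using Python's built-in `csv` and `sqlite3` modules; the column headers (`Job Title`, `Company`, `Location`, `Job URL`) and the `load_jobs_csv` function are hypothetical, not the template's documented output, so rename them to match the headers in your actual export.

```python
import csv
import io
import sqlite3


def load_jobs_csv(conn: sqlite3.Connection, csv_file) -> int:
    """Insert rows from an exported CSV into a `jobs` table and return
    how many new rows were added. Column names are assumptions."""
    conn.execute(
        """CREATE TABLE IF NOT EXISTS jobs (
               url TEXT PRIMARY KEY,
               title TEXT,
               company TEXT,
               location TEXT
           )"""
    )
    before = conn.execute("SELECT COUNT(*) FROM jobs").fetchone()[0]
    rows = [
        (r["Job URL"], r["Job Title"], r["Company"], r["Location"])
        for r in csv.DictReader(csv_file)
    ]
    # INSERT OR IGNORE skips rows whose URL (the primary key) is already stored.
    conn.executemany(
        "INSERT OR IGNORE INTO jobs (url, title, company, location) VALUES (?, ?, ?, ?)",
        rows,
    )
    conn.commit()
    after = conn.execute("SELECT COUNT(*) FROM jobs").fetchone()[0]
    return after - before


# Usage with an in-memory sample; for a real export, pass
# open("export.csv", newline="", encoding="utf-8") instead.
export = io.StringIO(
    "Job Title,Company,Location,Job URL\n"
    "Video Editor,Acme Media,Seattle,https://example.com/jobs/1\n"
    "Video Editor,Acme Media,Seattle,https://example.com/jobs/1\n"
)
conn = sqlite3.connect(":memory:")
print(load_jobs_csv(conn, export))  # 1 (the duplicate row is ignored)
```

Using the posting URL as the primary key is one simple way to keep re-imports from creating duplicates; any stable unique identifier in your export would work the same way.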

Until they come up with a good idea, people naturally tend to think the market is saturated or that big names have taken all the market share. Our clients stand out because they are good at finding a niche.

You could build an industry-based job aggregator that serves companies and job-seekers in one particular industry. Fields that require specialized expertise, such as finance, healthcare, and IT, are good places to start. Or you could build a job aggregator for small cities that are underserved by existing job-search and staffing services.

Be Resourceful

Once you know who your target users are, you need to offer what they want in order to attract them. This is a vital step toward gaining traction: scrape a large number of high-quality job postings for your site, and make sure they fit your niche.

One of the job boards we serve focuses on small local cities. The team searches the Internet for every site where local job opportunities are posted and scrapes hundreds of them, gathering valuable information from every nook and cranny. This comes with difficulties: some sites use anti-scraping techniques; millions of records can overload the scraper and slow it down; moving data from the scraping tool to the database by downloading and re-uploading files is inconvenient; and much of the data is unstructured. These are very practical issues, and most of our job board clients have run into them.

To get around these obstacles, Octoparse developed features such as automatic IP rotation, cloud scraping, and API connections, which help keep scraping smooth and reliable across large numbers of sites. If you have great sources at hand but these issues have you stuck, we are happy to help.
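The unstructured-data problem mentioned above often means that each source names and formats the same fields differently, and the same opening may appear on several sites. Here is a minimal normalization sketch, assuming two hypothetical source layouts (`site_a`, `site_b`) and hypothetical field names: it maps raw records into one schema, cleans whitespace, and drops duplicates by comparing title, company, and location case-insensitively. It is a generic illustration, not an Octoparse feature.

```python
import re

# Hypothetical field mappings: each source site names the same data differently.
FIELD_MAPS = {
    "site_a": {"title": "jobTitle", "company": "employer", "location": "city"},
    "site_b": {"title": "position", "company": "company_name", "location": "loc"},
}


def clean(text) -> str:
    # Collapse runs of whitespace and strip leading/trailing spaces;
    # missing (None) values become empty strings.
    return re.sub(r"\s+", " ", text or "").strip()


def normalize(source: str, raw: dict) -> dict:
    """Map a raw scraped record from `source` into the common schema."""
    mapping = FIELD_MAPS[source]
    return {field: clean(raw.get(key, "")) for field, key in mapping.items()}


def dedupe(records: list[dict]) -> list[dict]:
    """Keep the first record for each (title, company, location) triple,
    compared case-insensitively."""
    seen = set()
    unique = []
    for r in records:
        key = (r["title"].lower(), r["company"].lower(), r["location"].lower())
        if key not in seen:
            seen.add(key)
            unique.append(r)
    return unique


records = [
    normalize("site_a", {"jobTitle": " Nurse  ", "employer": "Mercy Clinic", "city": "Boise"}),
    normalize("site_b", {"position": "nurse", "company_name": "Mercy  Clinic", "loc": "Boise"}),
]
print(dedupe(records))
# [{'title': 'Nurse', 'company': 'Mercy Clinic', 'location': 'Boise'}]
```

Exact matching on a few normalized fields is deliberately conservative; fuzzier matching catches more duplicates but also risks merging postings that are genuinely different.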

Keep Updating

Recruitment is always time-sensitive. If you can publish job openings and hiring information before other job boards do, you are serving your users well.

Companies don't necessarily hire the best candidate on the market; they often hire whoever applies at the right time. Make sure your job aggregator keeps track of updates from your sources, so you can be the first to tell job-seekers: here is a new opportunity, don't miss it. Octoparse offers scheduled scraping, incremental scraping, and Cloud scraping to help our clients do this. Scheduled tasks run automatically at the times you set. Incremental scraping skips records collected before and targets only newly added or updated ones, which saves storage space and keeps your data organized. If you use the Cloud, storage space is not a concern at all, because our servers take care of the data for you.

API connections benefit clients in another way: they save the time spent switching back and forth between the Octoparse client and their database. You can access data and manage tasks directly from your own system.
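The idea behind incremental scraping described above can be illustrated on your own side of the pipeline too. This is a generic sketch, not how Octoparse implements the feature: it assumes each posting is a dict with a hypothetical `url` key, fingerprints each posting's content with SHA-256, and returns only postings that are new or whose content changed since the previous run. In practice you would persist the `seen` mapping (for example in your database) and trigger runs on a schedule.

```python
import hashlib
import json


def fingerprint(posting: dict) -> str:
    # Hash the whole posting so edits to an existing URL are detected.
    payload = json.dumps(posting, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def incremental_update(scraped: list[dict], seen: dict[str, str]) -> list[dict]:
    """Return postings that are new or changed since the last run.

    `seen` maps each posting URL to the fingerprint stored on the previous
    run; it is updated in place so the next run can compare against it.
    """
    changed = []
    for posting in scraped:
        fp = fingerprint(posting)
        if seen.get(posting["url"]) != fp:
            seen[posting["url"]] = fp
            changed.append(posting)
    return changed


seen: dict[str, str] = {}
run1 = [{"url": "https://example.com/jobs/1", "title": "Video Editor"}]
print(len(incremental_update(run1, seen)))  # 1: first time this URL is seen
print(len(incremental_update(run1, seen)))  # 0: unchanged, skipped
run2 = [{"url": "https://example.com/jobs/1", "title": "Senior Video Editor"}]
print(len(incremental_update(run2, seen)))  # 1: content changed
```

The postings returned by each run are exactly the ones worth pushing to job-seekers as new-opportunity notifications.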

With a powerful web scraping tool, aggregating job postings is achievable. If you are confident in your idea for filling a gap in the market, try building your own job aggregator.