Web crawling (also known as web data extraction or web scraping) is widely applied across many fields today. A web crawler, also known as a spider or bot, is an automated program or script that systematically browses the web to collect and index information from websites.
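To make "systematically browses the web" concrete, here is a minimal sketch of the core loop most crawlers share: fetch a page, pull out its links, and queue any link not seen before. The `FAKE_WEB` dictionary, the `example.com` URLs, and the `crawl` function are assumptions made for illustration so the sketch runs without network access; a real crawler would fetch pages over HTTP and respect each site's robots.txt.

```python
from collections import deque
from html.parser import HTMLParser
from urllib.parse import urljoin

# Hypothetical in-memory "web" so the sketch runs without network access.
FAKE_WEB = {
    "https://example.com/": '<a href="/a">A</a> <a href="/b">B</a>',
    "https://example.com/a": '<a href="/b">B</a> <a href="/">home</a>',
    "https://example.com/b": "<p>No links here.</p>",
}


class LinkParser(HTMLParser):
    """Collects the href value of every <a> tag it sees."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.links.append(href)


def crawl(start_url, fetch, max_pages=100):
    """Breadth-first crawl: visit each reachable URL once, in discovery order."""
    seen = {start_url}
    queue = deque([start_url])
    visited = []
    while queue and len(visited) < max_pages:
        url = queue.popleft()
        html = fetch(url)
        if html is None:  # page could not be fetched, so skip it
            continue
        visited.append(url)
        parser = LinkParser()
        parser.feed(html)
        for href in parser.links:
            absolute = urljoin(url, href)  # resolve relative links
            if absolute not in seen:
                seen.add(absolute)
                queue.append(absolute)
    return visited


order = crawl("https://example.com/", FAKE_WEB.get)
print(order)
```

The `seen` set is what keeps the crawler from looping forever when pages link back to each other, and `max_pages` is a simple safety limit.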

Web crawling technology is developing fast, and it is widely used in many industries to improve working efficiency. There are many web crawling tools you can choose from even if you know nothing about coding.

In this article, you can learn about the top 20 web crawlers, spanning desktop applications, browser extensions, and cloud services. Both paid and free web crawlers are included.

How Can a Web Crawler Help

Before we get started, here are the key ways a web crawler can help you.

- No more repetitive work of copying and pasting.

- Get well-structured data in formats including, but not limited to, Excel, HTML, and CSV.

- Time-saving and cost-efficient.

- It is a practical solution for marketers, online sellers, journalists, YouTubers, researchers, and many others who lack technical skills.

Learn more use cases and industries that web scraping and crawling technologies can help with.

Top 20 Web Crawlers You Cannot Miss

7 Web Crawlers for Windows/Mac

1. Octoparse – Free

Octoparse is a free web crawling tool for Windows and macOS that gets web data into spreadsheets easily. With its AI-based auto-detecting function and user-friendly point-and-click interface, you can use Octoparse without any coding skills. It also provides preset data scraping templates for popular websites, so you can extract data by entering only a few parameters.

https://www.octoparse.com/template/email-social-media-scraper

What’s more, Octoparse also has advanced functions to meet more scraping needs. For example, you can schedule your data scraping at any time you want with the cloud scraping service. IP rotation and CAPTCHA bypass are also available to avoid being blocked during the data crawling process.

Main features of Octoparse:

- Turn website data into structured Excel, CSV, or Google Sheets files, or send it directly to your database.

- Scrape data easily with auto-detecting functions; no coding skills are required.

- Use preset scraping templates for popular websites to get data in a few clicks.

- Avoid blocks with IP proxies and an advanced API.

- Schedule data scraping at any time you want with the cloud service.

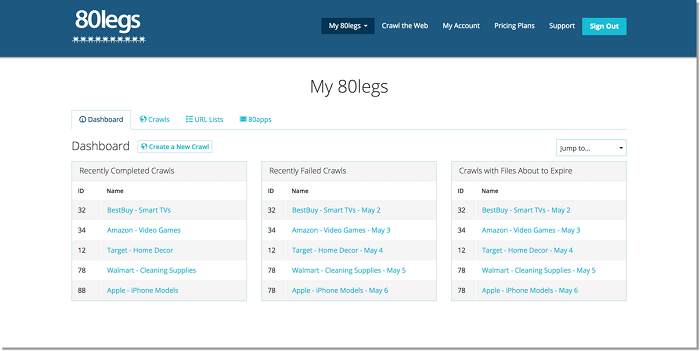

2. 80legs

80legs is a web crawling tool that can be configured to meet customized requirements. It provides powerful crawling capabilities for businesses, researchers, and developers looking to automate data extraction tasks. 80legs offers a flexible, scalable solution for collecting structured data and supports tasks such as content aggregation, market analysis, and competitive research.

Main features of 80legs:

- API: 80legs offers an API for users to create crawlers, manage data, and more.

- Scraper customization: 80legs’ JS-based app framework enables users to configure web crawls with customized behaviors.

- IP servers: A collection of IP addresses is used in web scraping requests.

3. ParseHub

ParseHub is a web crawler that collects data from websites that use AJAX, JavaScript, cookies, and similar technologies. Its machine-learning technology can read, analyze, and then transform web documents into relevant data. With ParseHub, users can quickly create custom web crawlers to collect data for market research, price comparison, and more.

ParseHub main features:

- Visual Interface: Easy-to-use, no coding required.

- Customizable Workflows: Tailor crawlers to specific data needs.

- Export Options: Export data to formats like CSV, Excel, or JSON.

4. Visual Scraper

Visual Scraper is designed to easily extract structured data from websites through a visual interface. It allows users to create custom scraping workflows by simply selecting elements on a webpage, making it ideal for non-technical users. Visual Scraper supports scraping content from dynamic websites and can handle complex data extraction tasks, such as navigating multiple pages or interacting with forms.

Features for Visual Scraper:

- Various data formats: Excel, CSV, MS Access, MySQL, MSSQL, XML, or JSON.

- Support for Dynamic Content: Extract data from JavaScript-driven websites.

- Flexible Data Extraction: Scrape text, images, links, and other elements.

5. WebHarvy

WebHarvy is an intuitive point-and-click web scraping tool designed for non-technical users. It automatically identifies patterns in web pages and extracts data such as product details, images, and text from multiple websites. WebHarvy is particularly useful for e-commerce, real estate, and price comparison websites, offering an easy way to collect data without writing any code.

WebHarvy important features:

- Scrape Text, Images, URLs & Emails from websites.

- Proxy support enables anonymous crawling and prevents being blocked by web servers.

- Data format: XML, CSV, JSON, or TSV file. Users can also export the scraped data to an SQL database.

6. Sequentum

Content Grabber (now Sequentum) is a web crawling tool targeted at enterprises. It allows you to create stand-alone web crawling agents. Users can write and debug scripts in C# or VB.NET to control the crawling process programmatically. It can extract content from almost any website and save it as structured data in a format of your choice.

Features of Content Grabber:

- Integration with third-party data analytics or reporting applications.

- Powerful script editing and debugging interfaces.

- Data formats: Excel reports, XML, CSV, and export to most databases.

7. Helium Scraper

Helium Scraper is visual web data crawling software. A 10-day trial is available for new users, and once you are satisfied with how it works, a one-time purchase lets you use the software for a lifetime. It should satisfy users’ crawling needs at an elementary level.

Helium Scraper main features:

- Data format: Export data to CSV, Excel, XML, JSON, or SQLite.

- Fast extraction: Options to block images or unwanted web requests.

- Proxy rotation.

3 Website Downloaders

8. Cyotek WebCopy

Cyotek WebCopy does what its name suggests. It’s a free website crawler that allows you to copy partial or full websites to your local hard disk for offline reference. You can change its settings to tell the bot how you want it to crawl. Besides that, you can also configure domain aliases, user agent strings, default documents, and more.

However, WebCopy does not include a virtual DOM or any form of JavaScript parsing. If a website relies heavily on JavaScript to operate, WebCopy is unlikely to make a true copy, and it will probably not handle dynamic website layouts correctly.
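The limitation is easy to reproduce with any parser that reads HTML without executing scripts. The sketch below is not WebCopy's code; it uses Python's standard `html.parser` on a made-up page (`PAGE` and `StaticLinkParser` are hypothetical names) to show that a link created by JavaScript never appears in the static markup a downloader sees.

```python
from html.parser import HTMLParser

# Hypothetical page: one link is in the markup, one is created by JavaScript.
PAGE = """
<html><body>
  <a href="/about.html">About</a>
  <div id="menu"></div>
  <script>
    var link = document.createElement("a");
    link.href = "/products.html";
    document.getElementById("menu").appendChild(link);
  </script>
</body></html>
"""


class StaticLinkParser(HTMLParser):
    """Collects href values from <a> tags present in the raw HTML."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.links.append(href)


parser = StaticLinkParser()
parser.feed(PAGE)
static_links = parser.links
print(static_links)  # only the link written directly in the markup
```

A browser would show both links after running the script, which is why JavaScript-heavy sites usually need a tool that drives a real browser engine.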

9. HTTrack

As free website crawler software, HTTrack provides functions well suited for downloading an entire website to your PC. It has versions available for Windows, Linux, Sun Solaris, and other Unix systems, which covers most users. HTTrack can mirror one site, or several sites together (with shared links). You can decide the number of connections to open concurrently while downloading web pages under “set options”. You can get photos, files, and HTML code from the mirrored website and resume interrupted downloads.

In addition, proxy support is available within HTTrack to maximize speed. HTTrack works as a command-line program, or through a shell, for both private (capture) and professional (online web mirror) use. With that said, HTTrack is better suited to people with advanced programming skills.

10. Getleft

Getleft is a free and easy-to-use website grabber. It allows you to download an entire website or any single web page. After you launch Getleft, you can enter a URL and choose the files you want to download before it gets started. As it downloads, it changes all the links for local browsing. Additionally, it offers multilingual support: Getleft now supports 14 languages. However, it provides only limited FTP support; it will download the files, but not recursively.

On the whole, Getleft should satisfy users’ basic crawling needs without requiring more complex technical skills.
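Changing links for local browsing generally means replacing each same-site URL with a relative path to the downloaded file. The following is a rough sketch of that idea, not Getleft's actual algorithm: the folder layout produced by `local_path`, the `rewrite_links` function, and the sample URLs are all assumptions, and the regular expression only handles double-quoted `href` attributes.

```python
import posixpath
import re
from urllib.parse import urljoin, urlparse


def local_path(url):
    """Map a URL to a file path inside the mirror folder (hypothetical layout)."""
    parsed = urlparse(url)
    path = parsed.path
    if path == "" or path.endswith("/"):
        path += "index.html"  # directory URLs are saved as index.html
    return parsed.netloc + path


def rewrite_links(html, page_url):
    """Rewrite same-site href values so they point at the mirrored files."""
    site = urlparse(page_url).netloc
    page_dir = posixpath.dirname(local_path(page_url))

    def replace(match):
        target = urljoin(page_url, match.group(2))
        if urlparse(target).netloc != site:
            return match.group(0)  # leave external links untouched
        relative = posixpath.relpath(local_path(target), start=page_dir)
        return match.group(1) + relative + match.group(3)

    return re.sub(r'(href=")([^"]*)(")', replace, html)


html = '<a href="/docs/">Docs</a> <a href="https://other.org/x">Ext</a>'
rewritten = rewrite_links(html, "https://example.com/blog/post.html")
print(rewritten)
```

Because the rewritten paths are relative, the mirrored folder can be moved or opened from any location without breaking navigation.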

2 Web Crawler Extensions

11. Scraper

Scraper is a Chrome extension with limited data extraction features, but it’s helpful for online research. It also allows exporting the data to Google Spreadsheets. This tool is intended for both beginners and experts. You can easily copy the data to the clipboard or store it in spreadsheets using OAuth. Scraper can auto-generate XPaths for defining URLs to crawl. It doesn’t offer all-inclusive crawling services, but most people don’t need to tackle messy configurations anyway.
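If XPath is new to you, it is a small query language for selecting elements by their position in the page structure. The sketch below shows what such a selector does, using Python's built-in `xml.etree.ElementTree`. The sample markup and the XPath expression are hand-written assumptions, not output from the Scraper extension, and ElementTree supports only a subset of XPath and needs well-formed markup, so real pages typically call for an HTML-tolerant parser.

```python
import xml.etree.ElementTree as ET

# Hypothetical, well-formed sample page.
PAGE = """
<html><body>
  <ul class="results">
    <li><a href="/item/1">First</a></li>
    <li><a href="/item/2">Second</a></li>
  </ul>
  <a href="/help">Help</a>
</body></html>
"""

root = ET.fromstring(PAGE.strip())

# Select only the links inside the results list, ignoring the Help link.
links = root.findall(".//ul[@class='results']/li/a")
urls = [a.get("href") for a in links]
titles = [a.text for a in links]

print(urls)
print(titles)
```

One expression picks out every matching row at once, which is why point-and-click tools generate XPaths behind the scenes when you select a single example element.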

12. OutWit Hub

OutWit Hub is a Firefox add-on with dozens of data extraction features to simplify your web searches. This web crawler tool can browse through pages and store the extracted information in a proper format.

OutWit Hub offers a single interface for scraping small or large amounts of data as needed. It allows you to scrape any web page from the browser itself, and it can even create automatic agents to extract data.

It is one of the simplest web scraping tools: it is free to use and lets you extract web data without writing a single line of code.

5 Recommended Web Scraping Services

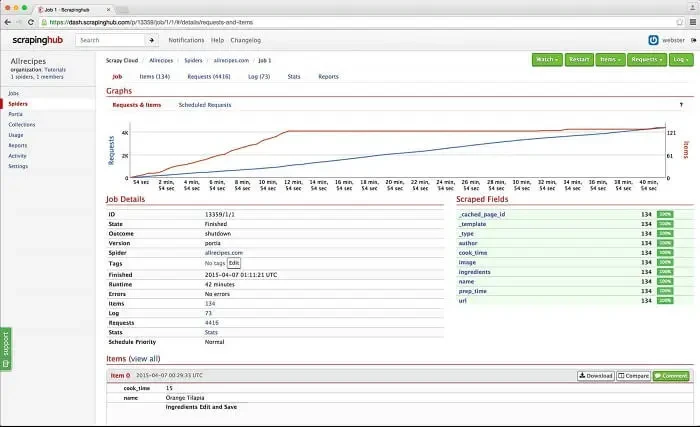

13. Zyte

Zyte (formerly Scrapinghub) is a cloud-based data extraction tool that helps thousands of developers fetch valuable data. Its open-source visual scraping tool allows users to scrape websites without any programming knowledge.

Zyte uses Crawlera, a smart proxy rotator that supports bypassing bot counter-measures to crawl huge or bot-protected sites easily. It enables users to crawl from multiple IPs and locations without the pain of proxy management through a simple HTTP API.

Zyte converts the entire web page into organized content. Its team of experts is available to help in case its crawl builder can’t meet your requirements.

14. Dexi.io

As a browser-based web crawler, Dexi.io lets you scrape data from any website within your browser and provides three types of robots for creating a scraping task: Extractor, Crawler, and Pipes. The free software provides anonymous web proxy servers for your web scraping, and your extracted data is hosted on Dexi.io’s servers for two weeks before it is archived; alternatively, you can export the extracted data directly to JSON or CSV files. Paid services are available if you need real-time data.

15. Webhose.io

Webhose.io enables users to get real-time data by crawling online sources from all over the world into various, clean formats. This web crawler enables you to crawl data and further extract keywords in different languages using multiple filters covering a wide array of sources.

You can save the scraped data in XML, JSON, and RSS formats, and access historical data from its Archive. Webhose.io supports up to 80 languages in its crawling results, and users can easily index and search the structured data it crawls.

On the whole, Webhose.io could satisfy users’ elementary crawling requirements.

16. Import.io

Users are able to form their own datasets by simply importing the data from a particular web page and exporting the data to CSV.

You can easily scrape thousands of web pages in minutes without writing a single line of code and build 1000+ APIs based on your requirements. Public APIs provide powerful and flexible capabilities to control Import.io programmatically and gain automated access to the data. Import.io has made crawling easier by integrating web data into your own app or website with just a few clicks.

To better serve users’ crawling requirements, it also offers a free app for Windows, Mac OS X, and Linux to build data extractors and crawlers, download data and sync with the online account. Plus, users are able to schedule crawling tasks weekly, daily, or hourly.

17. Spinn3r

Spinn3r allows you to fetch complete data from blogs, news and social media sites, and RSS and ATOM feeds. Spinn3r is distributed with a Firehose API that manages 95% of the indexing work. It offers advanced spam protection, which removes spam and inappropriate language, thus improving data safety.

Spinn3r indexes content similarly to Google and saves the extracted data in JSON files. The web scraper constantly scans the web and finds updates from multiple sources to get you real-time publications. Its admin console lets you control crawls, and full-text search allows complex queries on raw data.

RPA Tool for Web Scraping

18. UiPath

UiPath is robotic process automation (RPA) software that can be used for free web scraping. It automates web and desktop data extraction from most third-party apps. The software can be installed on Windows. UiPath can extract tabular and pattern-based data across multiple web pages.

UiPath provides built-in tools for further crawling. This approach is very effective when dealing with complex UIs. The Screen Scraping Tool can handle individual text elements, groups of text, and blocks of text, such as data in table format.

Plus, no programming is needed to create intelligent web agents, but the .NET hacker inside you will have complete control over the data.

2 Library Methods for Programmers

19. Scrapy

Scrapy is an open-source framework that runs on Python. It offers a ready-to-use structure for programmers to customize a web crawler and extract data from the web on a large scale. With Scrapy, you have the flexibility to configure a scraper that meets your needs, for example, by defining exactly what data you extract, how it is cleaned, and in what format it is exported.

On the other hand, you will face multiple challenges during the web scraping process and will need to put effort into maintaining your scraper. With that said, you may want to start by practicing data scraping with Python on some real examples.

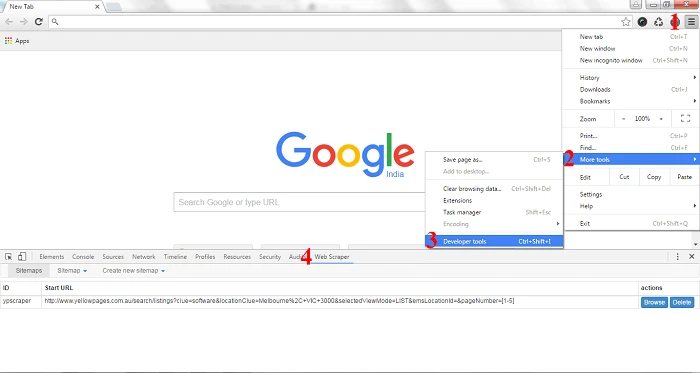

20. Puppeteer

Puppeteer is a Node library developed by Google. It provides an API for programmers to control Chrome or Chromium over the DevTools Protocol, which lets them build a web scraping tool with Puppeteer and Node.js. If you are new to programming, you may need to spend some time reading the tutorials.

Besides web scraping, Puppeteer is also used to:

- Get screenshots or PDFs of web pages.

- Automate form submission/data input.

- Create a tool for automatic testing.

What Makes a Good Web Crawler

After learning about so many web crawlers, you also need to know how to choose one that meets your needs. Here are some factors that make a good web crawler, which you can use for reference.

- Efficiency and Speed: Ability to handle large volumes of data quickly without overloading servers.

- Scalability: Can handle growing data extraction needs as your project expands.

- Error Handling: Gracefully handles errors like broken links or server downtime without interruption.

- Support for Dynamic Content: Can extract data from sites with JavaScript, AJAX, and SPAs.

- CAPTCHA and Proxy Handling: Can bypass CAPTCHAs and rotate IP addresses to avoid IP blocking.

- Customization: Provides flexibility to define specific scraping rules, such as data fields and crawl depth.

- Ease of Use: User-friendly interfaces or no-code options for non-technical users.

- Data Quality and Accuracy: Ensures clean and structured data extraction with minimal errors.
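Of the factors above, error handling is the easiest to picture in code. Below is a minimal sketch of retrying a failed request with exponential backoff, so a temporary outage does not stop the whole crawl and the server is not hammered with instant retries. The `fetch_with_retry` helper, the `flaky_fetch` stand-in, and the delay values are assumptions for illustration, not taken from any tool listed here.

```python
import time


def fetch_with_retry(fetch, url, retries=3, base_delay=1.0, sleep=time.sleep):
    """Call fetch(url); on a network error, wait and retry with doubling delays."""
    last_error = None
    for attempt in range(retries + 1):
        try:
            return fetch(url)
        except OSError as error:  # ConnectionError and timeouts are OSError subclasses
            last_error = error
            if attempt < retries:
                sleep(base_delay * 2 ** attempt)  # 1s, 2s, 4s, ...
    raise last_error


# Hypothetical fetcher that fails twice before succeeding.
calls = {"count": 0}


def flaky_fetch(url):
    calls["count"] += 1
    if calls["count"] < 3:
        raise ConnectionError("temporary failure")
    return "<html>ok</html>"


delays = []  # record the waits instead of actually sleeping
body = fetch_with_retry(flaky_fetch, "https://example.com/", sleep=delays.append)
print(body, delays)
```

Passing `sleep` as a parameter keeps the example fast to run; in a real crawl you would leave the default so the waits actually happen.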

Final Words

In conclusion, selecting the right web crawling tool depends on your specific data extraction needs, whether you’re looking for ease of use, customization, or scalability. The 20 web crawling tools listed above offer diverse solutions that cater to different use cases, from small projects to large-scale data scraping tasks.

While each tool has its strengths, it’s essential to consider factors such as data handling capabilities, user interface, and integration options when making your choice. By using the right web crawler, you can streamline your data collection process, unlock valuable insights, and drive better business decisions.

If you know nothing about coding or want to save time and money on web crawling, Octoparse is your best choice. With it, you can simply build a web crawler and extract data from any website you want. Download Octoparse and sign up for a free account to start your data scraping now.