Can web scraping be done in Google Sheets? It is a common question now that Google Sheets has become one of the most popular cloud-based spreadsheet tools.

Google Sheets can serve as a basic web scraper. Built-in functions such as ImportXML and ImportHTML let you extract data from websites, import it directly into Google Sheets, and share it with others.

The sections below cover three easy methods for scraping data from websites with Google Sheets, followed by an alternative tool for extracting web data without any coding skills.

3 Methods of Google Sheets Web Scraping

Method 1: Using ImportXML in Google Spreadsheets

ImportXML is a function in Google Spreadsheets that allows you to import data from XML and HTML documents on the web. With ImportXML, you can extract specific data from a webpage by specifying an XPath query to locate the data you want. Here are the simple steps:

Step 1: Open a new Google Sheet, then open the website you want to scrape in Google Chrome. Right-click on the web page and select “Inspect” to open Chrome DevTools, then press “Ctrl + Shift + C” (“Cmd + Shift + C” on Mac) to activate the Selector tool.

Step 2: Copy the URL of the webpage and paste it into the Google Sheet. In the cell where you want the scraped data to appear, type the formula “=IMPORTXML(URL, XPath expression)”, replacing “URL” with the URL of the webpage and “XPath expression” with the XPath of the element you want to scrape. Both arguments are text, so enclose each in double quotation marks or point to a cell that contains the value.

Step 3: Once the formula is entered, the data from the selected element on the webpage will be automatically scraped and displayed in the corresponding cell in the Google Sheet.
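To see what an XPath query actually selects, here is a minimal Python sketch that runs a query against a small sample page. The sample markup, the `select_text` helper, and the class names are assumptions made for illustration only. Python's standard `xml.etree.ElementTree` supports just a subset of XPath and needs well-formed markup, so this only approximates what ImportXML does on a real page.

```python
import xml.etree.ElementTree as ET
from typing import List

# Hypothetical, well-formed sample page used only for illustration.
SAMPLE_PAGE = """
<html>
  <body>
    <h1>Game Sale</h1>
    <div class="product">
      <span class="name">Game A</span>
      <span class="price">$9.99</span>
    </div>
    <div class="product">
      <span class="name">Game B</span>
      <span class="price">$19.99</span>
    </div>
  </body>
</html>
"""


def select_text(document: str, xpath: str) -> List[str]:
    """Return the text of every element matched by a simple XPath query."""
    root = ET.fromstring(document.strip())
    return [(node.text or "").strip() for node in root.findall(xpath)]


# ElementTree paths are relative to the root element, hence the leading ".".
prices = select_text(SAMPLE_PAGE, ".//span[@class='price']")
print(prices)  # ['$9.99', '$19.99']
```

In a sheet, the comparable query would be written as //span[@class='price'] inside the ImportXML formula, and each matched element would fill its own cell.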

Method 2: Grab price data with ImportXML Formula

This method can be used to extract price data from a single element on a webpage.

Step 1: Select the price element on the webpage with the Selector tool. In the Elements panel, right-click the highlighted element to bring up the drop-down menu, select “Copy”, and then choose “Copy XPath” to copy the XPath of the element.

Step 2: Type the formula “=IMPORTXML(URL, XPath expression)” into the Google Sheet, using the XPath we just copied from Chrome as the “XPath expression”. Because the formula wraps the XPath in double quotation marks, replace every double quotation mark (") inside the XPath with a single quotation mark (').

Step 3: After entering the formula, the price data will be automatically scraped and displayed in the Google Sheet.
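The quote replacement in Step 2 is easy to get wrong by hand, so here is a small Python sketch that assembles the formula text for you. The helper name `build_importxml_formula`, the URL, and the XPath are hypothetical examples, not values from a real page.

```python
def build_importxml_formula(url: str, xpath: str) -> str:
    """Build an IMPORTXML formula string from a URL and a copied XPath.

    Double quotes inside the XPath are swapped for single quotes so they
    do not end the double-quoted formula argument early.
    """
    safe_xpath = xpath.replace('"', "'")
    return f'=IMPORTXML("{url}", "{safe_xpath}")'


# Hypothetical URL and XPath, in the style that "Copy XPath" produces.
copied_xpath = '//*[@id="price"]/span'
formula = build_importxml_formula("https://example.com/product", copied_xpath)
print(formula)
# =IMPORTXML("https://example.com/product", "//*[@id='price']/span")
```

The printed line can be pasted into a cell as-is.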

Method 3: Another formula to get data with Google Sheets

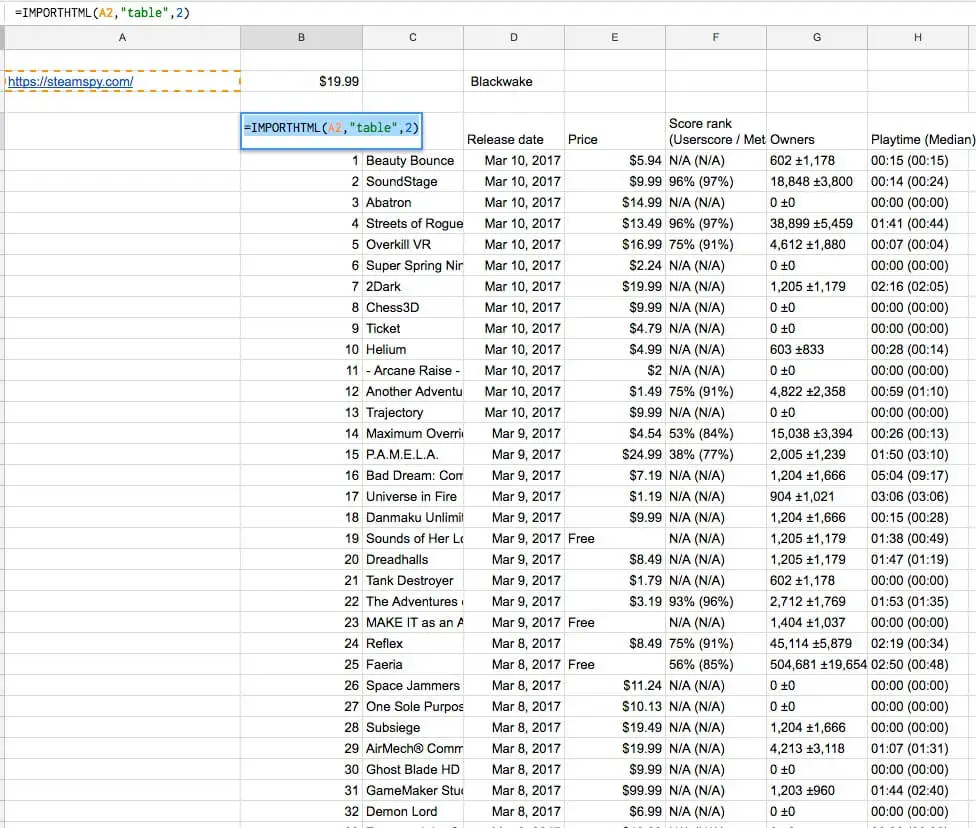

There’s another formula we can use: ImportHTML. It allows you to extract an entire table or list from a webpage and import it directly into a Google Sheet.

To use the ImportHTML function, type the formula “=IMPORTHTML(URL, QUERY, Index)” into the Google Sheet, replacing “URL” with the URL of the webpage, “QUERY” with either “table” or “list” depending on the structure that holds the data, and “Index” with the position of that table or list on the webpage, counting from 1.

Once the formula is entered, the entire table will be automatically scraped and displayed in the corresponding cells in the Google Sheet.
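To make the “Index” argument concrete, here is a rough Python sketch that collects every table in a piece of HTML and returns the one at a given 1-based position. The `TableCollector` class, the `import_table` helper, and the sample HTML are assumptions for illustration. It uses only the standard `html.parser` module, ignores nested tables, and is not how Google Sheets implements ImportHTML.

```python
from html.parser import HTMLParser
from typing import List


class TableCollector(HTMLParser):
    """Collect every <table> on a page as a list of rows of cell text."""

    def __init__(self) -> None:
        super().__init__()
        self.tables: List[List[List[str]]] = []
        self._in_cell = False

    def handle_starttag(self, tag, attrs):
        if tag == "table":
            self.tables.append([])          # start a new table
        elif tag == "tr" and self.tables:
            self.tables[-1].append([])      # start a new row
        elif tag in ("td", "th") and self.tables and self.tables[-1]:
            self.tables[-1][-1].append("")  # start a new cell
            self._in_cell = True

    def handle_endtag(self, tag):
        if tag in ("td", "th"):
            self._in_cell = False

    def handle_data(self, data):
        if self._in_cell:
            self.tables[-1][-1][-1] += data.strip()


def import_table(html: str, index: int) -> List[List[str]]:
    """Return the table at a 1-based index, as the Index argument counts."""
    parser = TableCollector()
    parser.feed(html)
    parser.close()
    if not 1 <= index <= len(parser.tables):
        raise IndexError(f"page has {len(parser.tables)} table(s)")
    return parser.tables[index - 1]


# Hypothetical page with two tables; we want the second one.
SAMPLE_HTML = """
<table><tr><th>Plan</th></tr><tr><td>Free</td></tr></table>
<table>
  <tr><th>Game</th><th>Price</th></tr>
  <tr><td>Game A</td><td>$9.99</td></tr>
  <tr><td>Game B</td><td>$19.99</td></tr>
</table>
"""
rows = import_table(SAMPLE_HTML, 2)
for row in rows:
    print(row)
```

Each inner list corresponds to one row of cells that ImportHTML would spread across the sheet.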

Google Sheets Alternative: Scraping Data Without Coding

Now, let’s see how the same scraping task can be accomplished with Octoparse, a dedicated web scraping tool. It can extract more data from websites than Google Sheets, and its auto-detecting mode means you don’t need to learn coding skills. Octoparse works on both Windows and Mac devices. Download it and follow the steps below.

Steps to Scrape Web Data with the Google Sheets Alternative

Step 1: After the quick installation, open Octoparse and build a new task by choosing “+Task” under “Advanced Mode”.

Step 2: Choose your preferred Task Group. Then enter the target website URL and click “Save URL”. In this example, we use the Game Sale website: https://steamspy.com/

Step 3: The Game Sale website is now displayed in the Octoparse interactive view section. We need to create a loop list so that Octoparse goes through the listings.

1. Click one table row (it could be any field within the table). Octoparse then detects similar items and highlights them in red.

2. We need to extract by rows, so choose “TR” (Table Row) from the control panel.

3. After one row has been selected, choose the “Select all sub-element” command from the Action Tips panel. Then choose the “Select All” command to select all rows from the table.

Step 4: Choose “Extract data in the loop” to extract the data.

You can export the data to Excel, CSV, TXT, or other formats. Whereas the spreadsheet approach requires you to copy and paste manually, Octoparse automates the process. In addition, Octoparse copes better with dynamic websites that use AJAX or reCAPTCHA.

Read the Octoparse Help Center if you still have any questions about scraping website data. If you’re looking for a data service for your project, Octoparse data service is a good choice. We work closely with you to understand your data requirements and make sure we deliver what you need. Talk to an Octoparse data expert now to discuss how web scraping services can help you make the most of your efforts.